I. Setting Up a Consul Cluster with Docker

1. Deploying a Consul cluster on Linux

2. Deploying a Consul cluster on Docker

(1). Docker Consul parameters explained

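--net=host: a docker parameter that lets the container bypass net namespace isolation, removing the need to specify port mappings manually

-client: the address Consul's client interfaces (HTTP, DNS) listen on; the default is 127.0.0.1

-bind: the address used for communication inside the cluster; the default is 0.0.0.0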

(2). Starting the first node, consul1

Official image page: https://hub.docker.com/_/consul. The Consul image has to be pulled first; the server 192.168.31.241 is used for this demonstration:

[root@manager_241 ~]# docker pull consul

Using default tag: latest

Error response from daemon: manifest for consul:latest not found: manifest unknown: manifest unknown

[root@manager_241 ~]# docker pull consul:1.14.1

1.14.1: Pulling from library/consul

9621f1afde84: Pull complete

...

92968d126abf: Pull complete

Digest: sha256:d8f44192b5c1df18df4e7cebe5b849e005eae2dea24574f64a60a2abd24a310e

Status: Downloaded newer image for consul:1.14.1

docker.io/library/consul:1.14.1

[root@manager_241 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

gowebimg v1.0.1 be3c1ee42ce2 2 days ago 237MB

mycentos v1 4ba38cf3943b 3 days ago 434MB

nginx latest a6bd71f48f68 3 days ago 187MB

6053537/portainer-ce latest b9c565f94ccc 4 weeks ago 322MB

mysql latest a3b6608898d6 4 weeks ago 596MB

consul 1.14.1 8540a77af6e2 12 months ago 149MB

Start the first node, consul1 (create a Consul service/container):

docker run --name consul1 -d -p 8500:8500 -p 8300:8300 -p 8301:8301 -p 8302:8302 -p 8600:8600 consul:1.14.1 agent -server -bootstrap-expect=3 -ui -bind=0.0.0.0 -client=0.0.0.0

or

docker run --name consul1 -d -p 8500:8500 consul:1.14.1 agent -server -bootstrap-expect=3 -ui -bind=0.0.0.0 -client=0.0.0.0

--name consul1: names the started Consul container consul1

-bootstrap-expect=3: the number of Consul server nodes expected in the cluster; a leader is elected once that many servers have joined
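The choice of 3 servers follows from majority voting among the server nodes. The sketch below is not from the original post; it only illustrates the usual majority arithmetic (quorum = n/2 + 1 with integer division). The function names quorum and fault_tolerance are made up for this illustration.

```shell
#!/bin/sh
# quorum N: smallest majority among N server nodes (integer division).
quorum() {
  echo $(( $1 / 2 + 1 ))
}

# fault_tolerance N: how many servers can fail while a majority still remains.
fault_tolerance() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 3 5; do
  echo "servers=$n quorum=$(quorum "$n") tolerated_failures=$(fault_tolerance "$n")"
done
```

With 3 servers the cluster keeps a majority after losing one node, which is why 3 is a common minimum for a server cluster.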

The full session looks like this:

[root@worker_241 ~]# docker pull consul

Using default tag: latest

Error response from daemon: manifest for consul:latest not found: manifest unknown: manifest unknown

The pull fails because the consul image has no latest tag, so a specific version must be given when pulling. Search for the available images first:

[root@worker_241 ~]# docker search consul

NAME DESCRIPTION STARS OFFICIAL AUTOMATED

consul Consul is a datacenter runtime that provides… 1427 [OK]

hashicorp/consul-template Consul Template is a template renderer, noti… 29

hashicorp/consul Automatic build of consul based on the curre… 53 [OK]

[root@worker_241 ~]# docker pull hashicorp/consul

Using default tag: latest

latest: Pulling from hashicorp/consul

96526aa774ef: Pull complete

8a755a53c1aa: Pull complete

fd305fe2d878: Pull complete

01d12fe0b370: Pull complete

cbc103c13062: Pull complete

4f4fb700ef54: Pull complete

3a5b5f5fe822: Pull complete

Digest: sha256:712fe02d2f847b6a28f4834f3dd4095edb50f9eee136621575a1e837334aaf09

Status: Downloaded newer image for hashicorp/consul:latest

docker.io/hashicorp/consul:latest

[root@worker_241 ~]#

[root@worker_241 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

gowebimg v1.0.1 be3c1ee42ce2 4 days ago 237MB

nginx <none> a6bd71f48f68 5 days ago 187MB

hashicorp/consul latest 48de899edccb 3 weeks ago 206MB

mysql latest a3b6608898d6 4 weeks ago 596MB

Create and start consul1:

[root@worker_241 ~]# docker run --name consul1 -d -p 8500:8500 -p 8300:8300 -p 8301:8301 -p 8302:8302 -p 8600:8600 hashicorp/consul agent -server -bootstrap-expect=3 -ui -bind=0.0.0.0 -client=0.0.0.0

2550fb171015d39dccad2b62379259337ee78d074536fd6d6e3383c12c71b113

[root@worker_241 ~]#

[root@worker_241 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

2550fb171015 hashicorp/consul "docker-entrypoint.s…" 26 seconds ago Up 14 seconds 0.0.0.0:8300-8302->8300-8302/tcp, :::8300-8302->8300-8302/tcp, 8301-8302/udp, 0.0.0.0:8500->8500/tcp, :::8500->8500/tcp, 0.0.0.0:8600->8600/tcp, :::8600->8600/tcp, 8600/udp consul1

[root@worker_241 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

66d69474d739 bridge bridge local

c2191211eabb docker_gwbridge bridge local

31616bf730aa host host local

1e0116a4c2da none null local

[root@worker_241 ~]# docker inspect 66d69474d739

[

{

"Name": "bridge",

"Id": "66d69474d739b7833552f10f0d7c2cc204fd89874fbb9b322bdb6ccf8f8e88cd",

"Created": "2023-11-25T20:06:24.898621409-08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": null,

"Config": [

{

"Subnet": "172.17.0.0/16",

"Gateway": "172.17.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

"Containers": {

"2550fb171015d39dccad2b62379259337ee78d074536fd6d6e3383c12c71b113": {

"Name": "consul1",

"EndpointID": "870bf26516364ba1fecfcab8e41188c9366c53d4a774bdd69704ccfbe63a9a61",

"MacAddress": "02:42:ac:11:00:02",

"IPv4Address": "172.17.0.2/16",

"IPv6Address": ""

}

},

"Options": {

"com.docker.network.bridge.default_bridge": "true",

"com.docker.network.bridge.enable_icc": "true",

"com.docker.network.bridge.enable_ip_masquerade": "true",

"com.docker.network.bridge.host_binding_ipv4": "0.0.0.0",

"com.docker.network.bridge.name": "docker0",

"com.docker.network.driver.mtu": "1500"

},

"Labels": {}

}

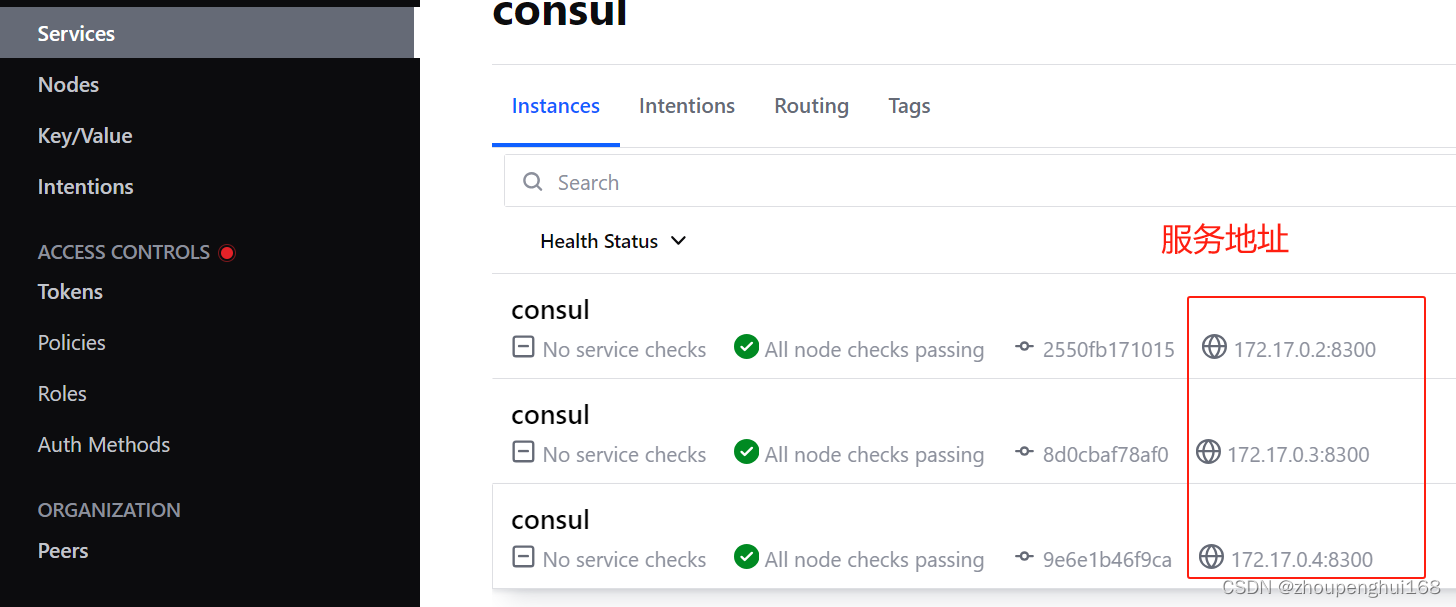

]发现consul1的ip地址为:172.17.0.2
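Instead of reading the address out of the full network JSON, the IP can usually be extracted directly by passing a Go template to docker inspect. This is a minimal sketch, assuming the container is attached to a single network as above; the helper name container_ip is hypothetical.

```shell
#!/bin/sh
# container_ip NAME: print the IP address of a container on its attached network(s).
# Relies on docker inspect's --format option, which takes a Go template.
container_ip() {
  docker inspect --format '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$1"
}

# Example (requires a running container named consul1):
#   container_ip consul1
```

The value printed for consul1 is the address to pass to -join when starting the other nodes.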

(3).启动第二个节点(端口8501),加入到 consul1

启动命令和linux搭建consul集群命令一致, –join 该ip为第一个个consul1节点ip地址(172.17.0.2)

docker run --name consul2 -d -p 8501:8500 hashicorp/consul agent -server -ui -bootstrap-expect=3 -bind=0.0.0.0 -client=0.0.0.0 -join 172.17.0.2[root@worker_241 ~]# docker run --name consul2 -d -p 8501:8500 hashicorp/consul agent -server -ui -bootstrap-expect=3 -bind=0.0.0.0 -client=0.0.0.0 -join 172.17.0.2

8d0cbaf78af0be8a8e81e95ccf63508b814dafee83870048ee74120f75e9bc09(3).启动第三个节点(端口8502),加入到 consul1

启动命令和linux搭建consul集群命令一致

docker run --name consul3 -d -p 8502:8500 hashicorp/consul agent -server -ui -bootstrap-expect=3 -bind=0.0.0.0 -client=0.0.0.0 -join 172.17.0.2[root@worker_241 ~]# docker run --name consul3 -d -p 8502:8500 hashicorp/consul agent -server -ui -bootstrap-expect=3 -bind=0.0.0.0 -client=0.0.0.0 -join 172.17.0.2

9e6e1b46f9caa2f8675c0c56b9e68fc7bca6c341f28b5f4ff12ba53a431e7463(4).启动一个consule客户端(端口 8503 )加入到consul1

docker run --name consulClient1 -d -p 8503:8500 hashicorp/consul agent -ui -bind=0.0.0.0 -client=0.0.0.0 -join 172.17.0.2[root@worker_241 ~]# docker run --name consulClient1 -d -p 8503:8500 hashicorp/consul agent -ui -bind=0.0.0.0 -client=0.0.0.0 -join 172.17.0.2

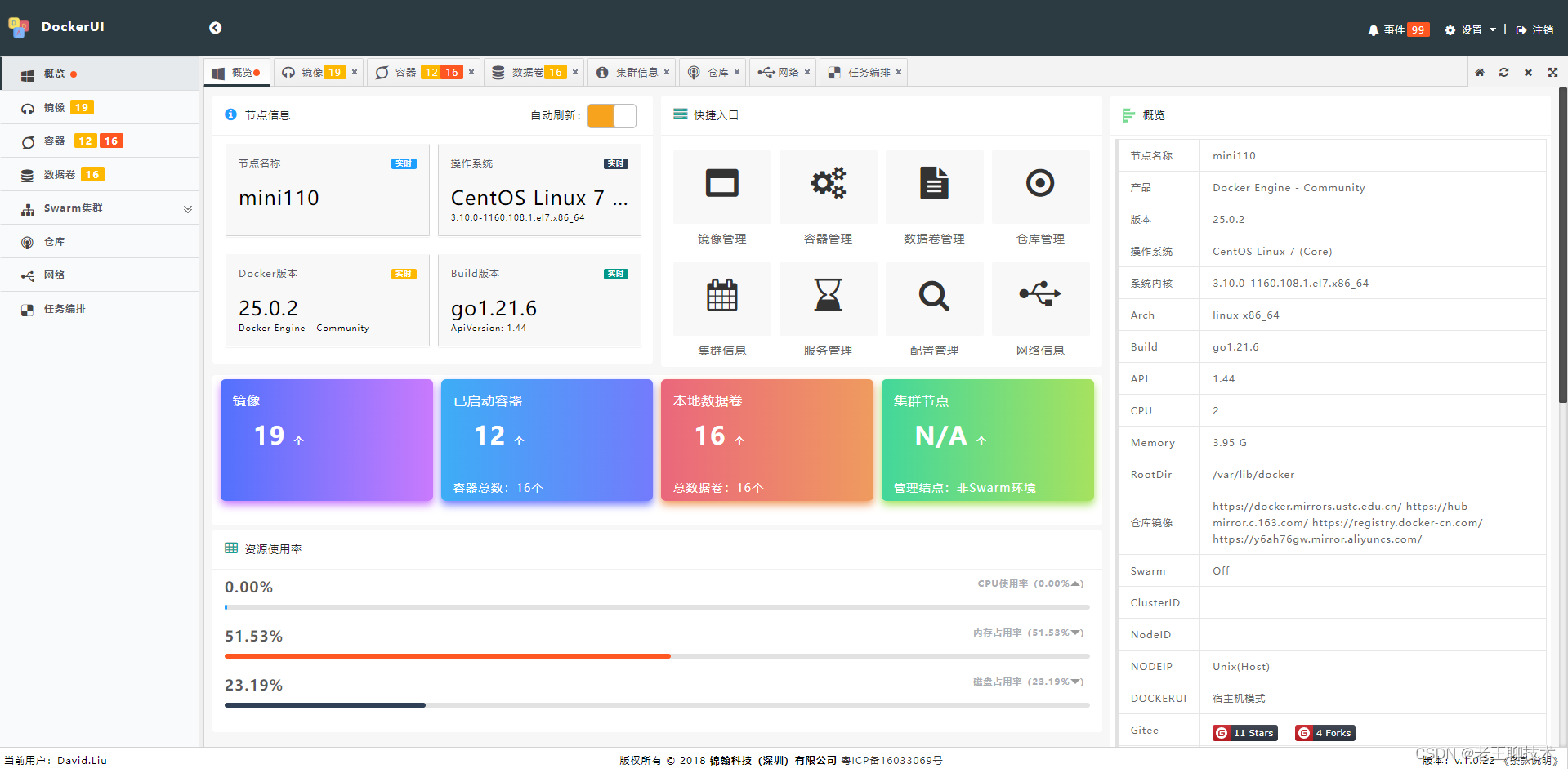

44d7066b26dae726309e1c21a7bde4b31099258e728d175bbef7cb1dc7e40398(5).查看consul

docker ps

docker exec -it consul1 consul members[root@worker_241 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

44d7066b26da hashicorp/consul "docker-entrypoint.s…" 2 minutes ago Up 2 minutes 8300-8302/tcp, 8301-8302/udp, 8600/tcp, 8600/udp, 0.0.0.0:8503->8500/tcp, :::8503->8500/tcp consulClient1

9e6e1b46f9ca hashicorp/consul "docker-entrypoint.s…" 3 minutes ago Up 3 minutes 8300-8302/tcp, 8301-8302/udp, 8600/tcp, 8600/udp, 0.0.0.0:8502->8500/tcp, :::8502->8500/tcp consul3

8d0cbaf78af0 hashicorp/consul "docker-entrypoint.s…" 4 minutes ago Up 4 minutes 8300-8302/tcp, 8301-8302/udp, 8600/tcp, 8600/udp, 0.0.0.0:8501->8500/tcp, :::8501->8500/tcp consul2

2550fb171015 hashicorp/consul "docker-entrypoint.s…" 18 minutes ago Up 18 minutes 0.0.0.0:8300-8302->8300-8302/tcp, :::8300-8302->8300-8302/tcp, 8301-8302/udp, 0.0.0.0:8500->8500/tcp, :::8500->8500/tcp, 0.0.0.0:8600->8600/tcp, :::8600->8600/tcp, 8600/udp consul1
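Besides consul members, the cluster state can also be checked from the Docker host through Consul's HTTP API on the published ports. The sketch below assumes curl is installed on the host; the helper names consul_leader and consul_peers are hypothetical, while /v1/status/leader and /v1/status/peers are Consul's status endpoints.

```shell
#!/bin/sh
# consul_leader ADDR: ask a Consul agent's HTTP API which server is the current leader.
consul_leader() {
  curl -s "http://$1/v1/status/leader"
}

# consul_peers ADDR: list the server nodes (Raft peers) known to the agent.
consul_peers() {
  curl -s "http://$1/v1/status/peers"
}

# Example, run on the Docker host (ports 8500-8503 are published above):
#   consul_leader 127.0.0.1:8500
#   consul_peers  127.0.0.1:8501
```

If all three servers joined correctly, the peers list should contain three addresses and every agent should report the same leader.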

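The Consul cluster is now set up, here on a single machine. For microservices without much concurrent load, running everything on one machine is perfectly workable (the advantage: over the bridge network the containers can reach one another directly, and by default they share one network and form one Consul cluster). With higher load, Consul needs to be deployed across several servers, which is covered next.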

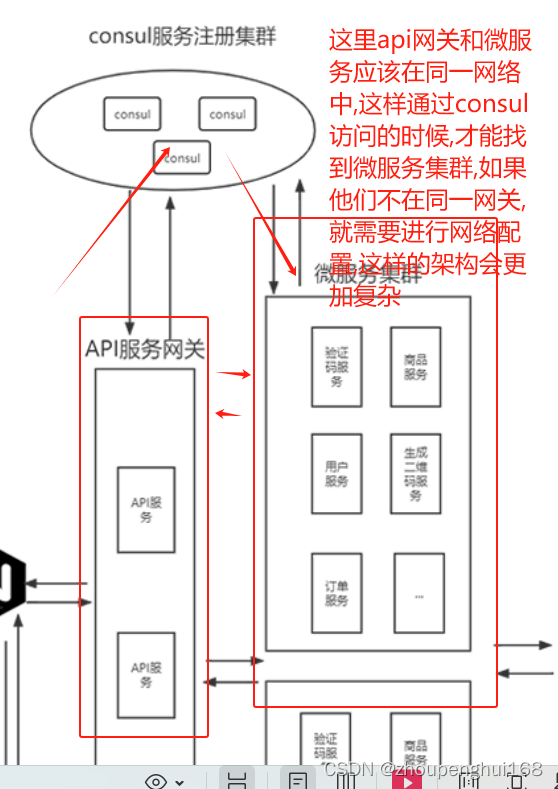

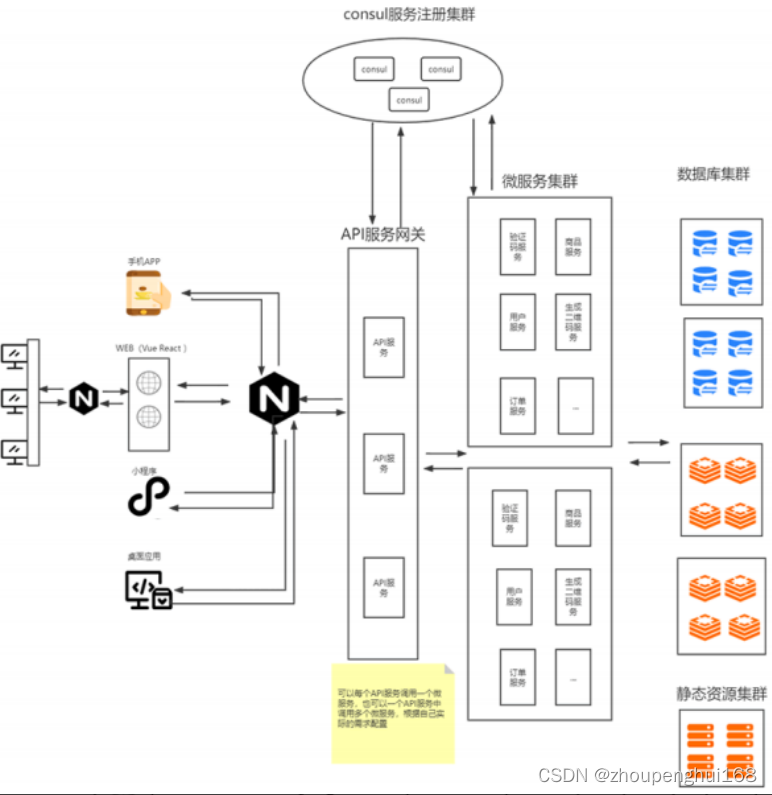

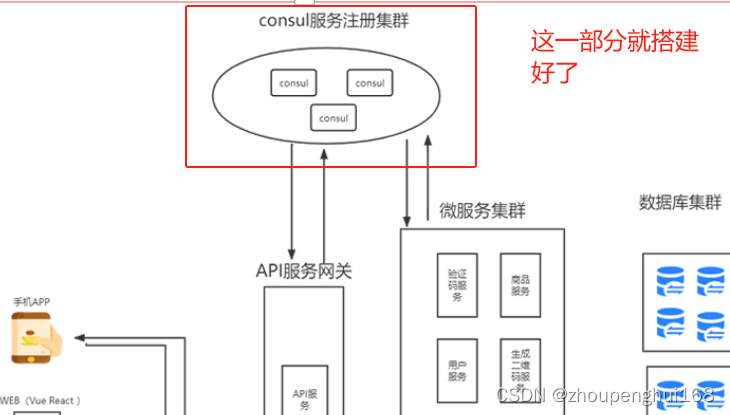

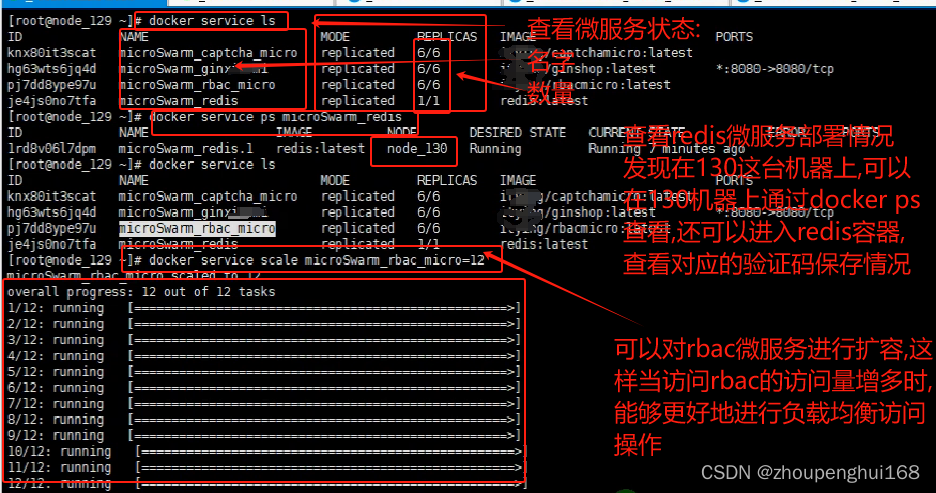

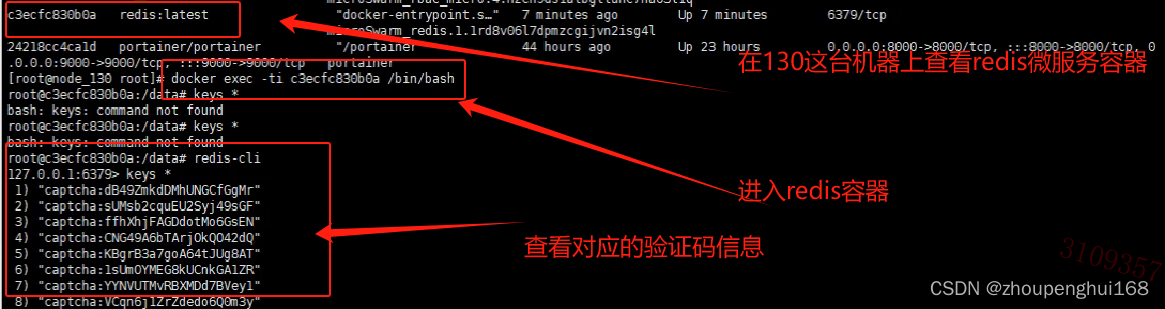

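II. Deploying Microservices in Practice with a Consul Cluster + Swarm Cluster

1. Deploying Consul on multiple servers

(1). Deploying a Consul cluster on Linux

2. Building a Consul cluster with Docker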

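The cluster can be built with docker run --net=host, which shares the host's network (and therefore the physical machine's IP address). Consul then runs as if directly on the host, so no ports need to be published and the nodes communicate through the host IPs. Taking the machine 192.168.31.117 as an example, the command is:

docker run --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=192.168.31.117 --name consul1 -v /consul_server/data:/consul/data consul agent -server -bootstrap-expect=3 -ui -bind=192.168.31.117 -client=0.0.0.0

--net=host: shares the host's network (the physical machine's IP address)

CONSUL_BIND_INTERFACE=ens33: the network interface to bind to

-h 192.168.31.117: sets the container hostname, here to the physical machine's IP

--name consul1: the name of the Consul container

-v: the data volume mapping

/consul_server/data:/consul/data: maps the host directory /consul_server/data to /consul/data in the container

consul: start the container from the consul image (it can also be started from another Consul image you have downloaded; normally the official Consul image is used)

agent -server: start the agent in server mode

The other parameters are the same as explained above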
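Since the same command is repeated on every host with only the container name and IP changed, it can help to generate it. The following sketch only prints the command so it can be reviewed before running; the function name consul_server_cmd is hypothetical, and it uses docker's -d (detached) flag in place of nohup ... &, which is a substitution rather than what the original post does. The interface ens33 and the data path are taken from the post.

```shell
#!/bin/sh
# consul_server_cmd NAME IP [JOIN_IP]: print (not run) the docker command for one server node.
consul_server_cmd() {
  name=$1 ip=$2 join=${3:-}
  cmd="docker run -d --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=$ip --name $name"
  cmd="$cmd -v /consul_server/data:/consul/data hashicorp/consul agent -server"
  cmd="$cmd -bootstrap-expect=3 -ui -bind=$ip -client=0.0.0.0"
  # Only nodes after the first one need -join.
  if [ -n "$join" ]; then
    cmd="$cmd -join $join"
  fi
  echo "$cmd"
}

consul_server_cmd consul1 192.168.31.117
consul_server_cmd consul2 192.168.31.140 192.168.31.117
```

Each printed line is meant to be run on the host whose IP it names.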

nohup docker run --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=192.168.31.117 --name consul1 -v /consul_server/data:/consul/data hashicorp/consul agent -server -bootstrap-expect=3 -ui -bind=192.168.31.117 -client=0.0.0.0 &

This starts a Consul container on 192.168.31.117. It shares the host's IP and is bound to the network interface, so the Consul container can be reached through that IP.

The full session looks like this:

[root@worker_117 ~]# docker pull hashicorp/consul

Using default tag: latest

latest: Pulling from hashicorp/consul

96526aa774ef: Pull complete

...

Digest: sha256:712fe02d2f847b6a28f4834f3dd4095edb50f9eee136621575a1e837334aaf09

Status: Downloaded newer image for hashicorp/consul:latest

docker.io/hashicorp/consul:latest

[root@worker_117 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

hashicorp/consul latest 48de899edccb 3 weeks ago 206MB

[root@worker_117 ~]# nohup docker run --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=192.168.31.117 --name consul1 -v /consul_server/data:/consul/data hashicorp/consul agent -server -bootstrap-expect=3 -ui -bind=192.168.31.117 -client=0.0.0.0 &

[root@worker_117 ~]#

[root@worker_117 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

d66621ff05c1 hashicorp/consul "docker-entrypoint.s…" 11 seconds ago Up 9 seconds consul1

One Consul node is now running. Next, start Consul on the other machines and join them to the cluster on 192.168.31.117:

nohup docker run --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=192.168.31.140 --name consul2 -v /consul_server/data:/consul/data hashicorp/consul agent -server -bootstrap-expect=3 -ui -bind=192.168.31.140 -client=0.0.0.0 -join 192.168.31.117 &

nohup docker run --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=192.168.31.81 --name consul3 -v /consul_server/data:/consul/data hashicorp/consul agent -server -bootstrap-expect=3 -ui -bind=192.168.31.81 -client=0.0.0.0 -join 192.168.31.117 &

nohup docker run --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=192.168.31.241 --name consul4 -v /consul_server/data:/consul/data hashicorp/consul agent -bind=192.168.31.241 -client=0.0.0.0 -join 192.168.31.117 &

192.168.31.140 joins the consul1 cluster:

[root@worker_140 ~]# nohup docker run --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=192.168.31.140 --name consul2 -v /consul_server/data:/consul/data hashicorp/consul agent -server -bootstrap-expect=3 -ui -bind=192.168.31.140 -client=0.0.0.0 -join 192.168.31.117 &

[1] 10150

[root@worker_140 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

1e54ad577bce hashicorp/consul "docker-entrypoint.s…" 11 seconds ago Up 10 seconds consul2

192.168.31.81 joins the consul1 cluster:

[root@manager_81 ~]# nohup docker run --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=192.168.31.81 --name consul3 -v /consul_server/data:/consul/data hashicorp/consul agent -server -bootstrap-expect=3 -ui -bind=192.168.31.81 -client=0.0.0.0 -join 192.168.31.117 &

[root@manager_81 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

fbf11320c18e hashicorp/consul "docker-entrypoint.s…" 2 minutes ago Up 2 minutes consul3

192.168.31.241 joins the consul1 cluster (as a client, without -server):

[root@worker_241 ~]# nohup docker run --net=host -e CONSUL_BIND_INTERFACE=ens33 -h=192.168.31.241 --name consul4 -v /consul_server/data:/consul/data hashicorp/consul agent -bind=192.168.31.241 -client=0.0.0.0 -join 192.168.31.117 &

[1] 17681

[root@worker_241 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

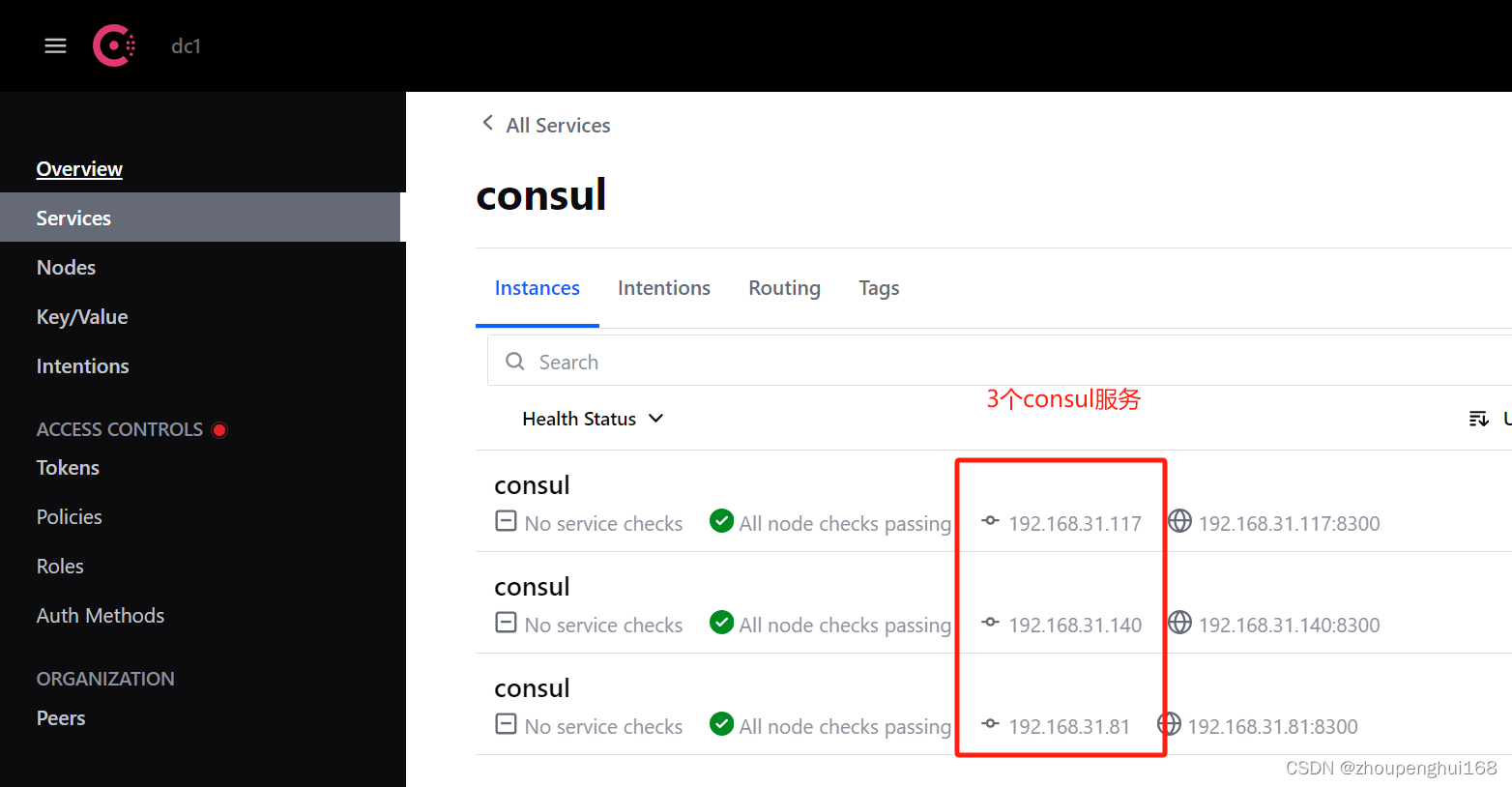

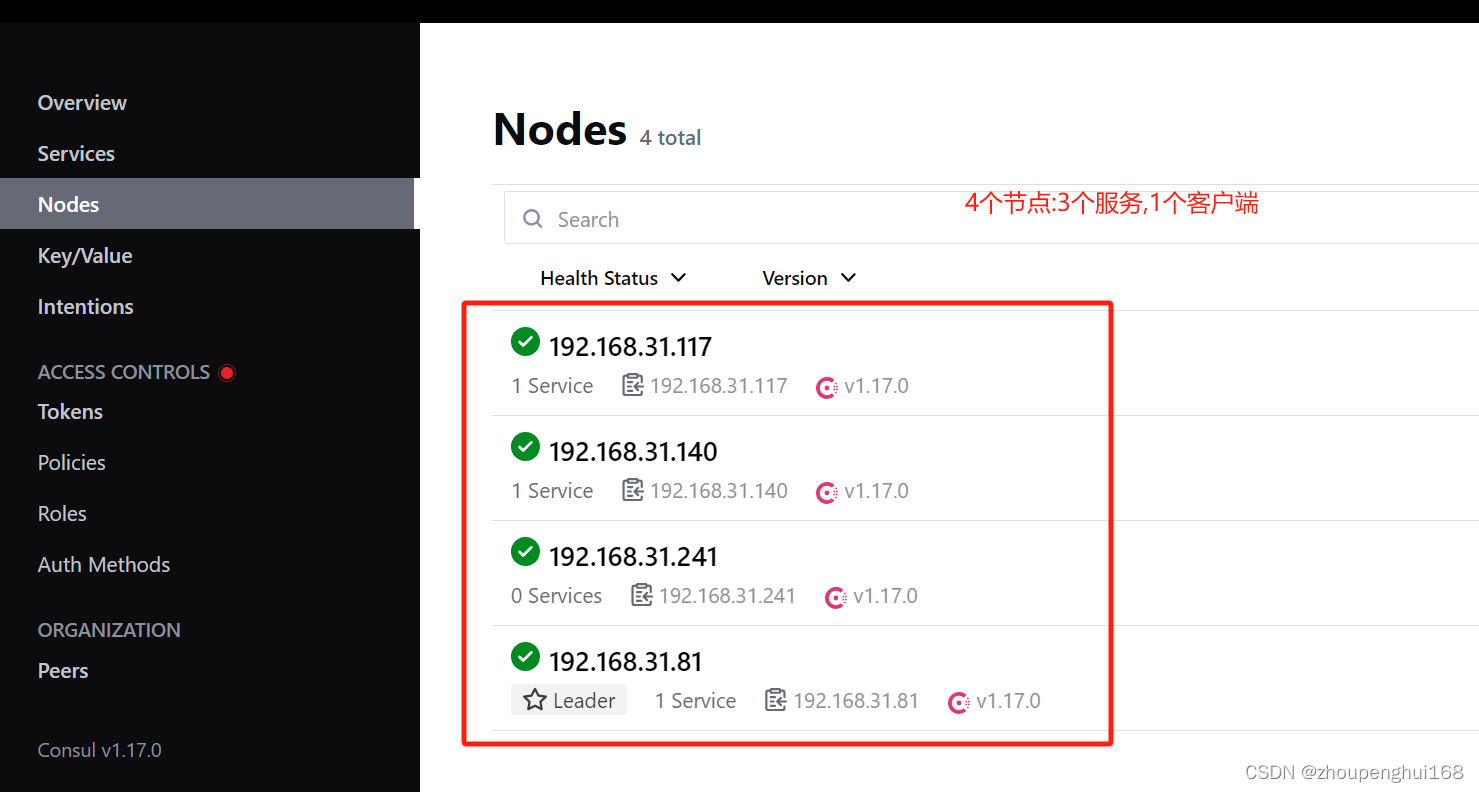

d2b0e76b4a13 hashicorp/consul "docker-entrypoint.s…" 4 seconds ago Up 1 second consul4可以在web上查看:

在http://192.168.31.140:8500/,http://192.168.31.117:8500/,http://192.168.31.81:8500/这几台机器上都可以查看

这样consul集群就通过docker部署好了,就可以通过物理机ip访问consul容器了,这几台机器就可以进行通信了

3.通过docker swarm搭建微服务集群

(1).准备mysql 以及 redis相关数据库

启动mysql

docker run --name myMysql -p 3306:3306 -v

/root/mysql/conf.d:/etc/mysql/conf.d -v /root/mysql/data:/var/lib/mysql -e

MYSQL_ROOT_PASSWORD=123456 -d mysql启动redis

docker run

-p 6379:6379

--name redis

-v /docker/redis/redis.conf:/etc/redis/redis.conf

-v /docker/redis/data:/data

--restart=always

-d redis redis-server /etc/redis/redis.conf对上面命令详解:

docker run

-p 6379:6379 docker与宿主机的端口映射

--name redis redis容器的名字

-v /docker/redis/redis.conf:/etc/redis/redis.conf 挂载redis.conf文件

-v /docker/redis/data:/data 挂在redis的持久化数据

--restart=always 设置redis容器随docker启动而自启动

-d redis redis-server /etc/redis/redis.conf 指定redis在docker中的配置文件路径,后

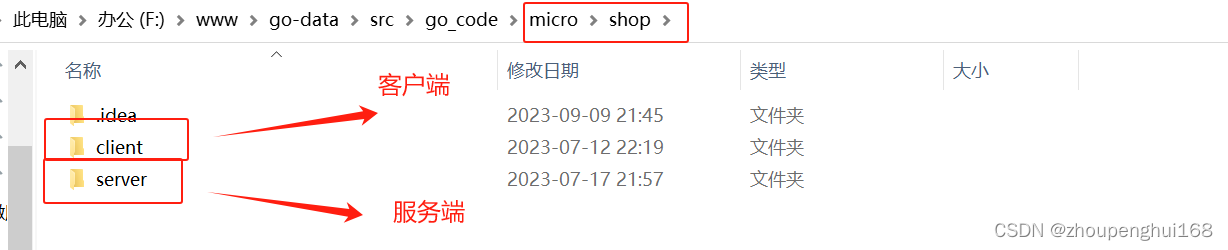

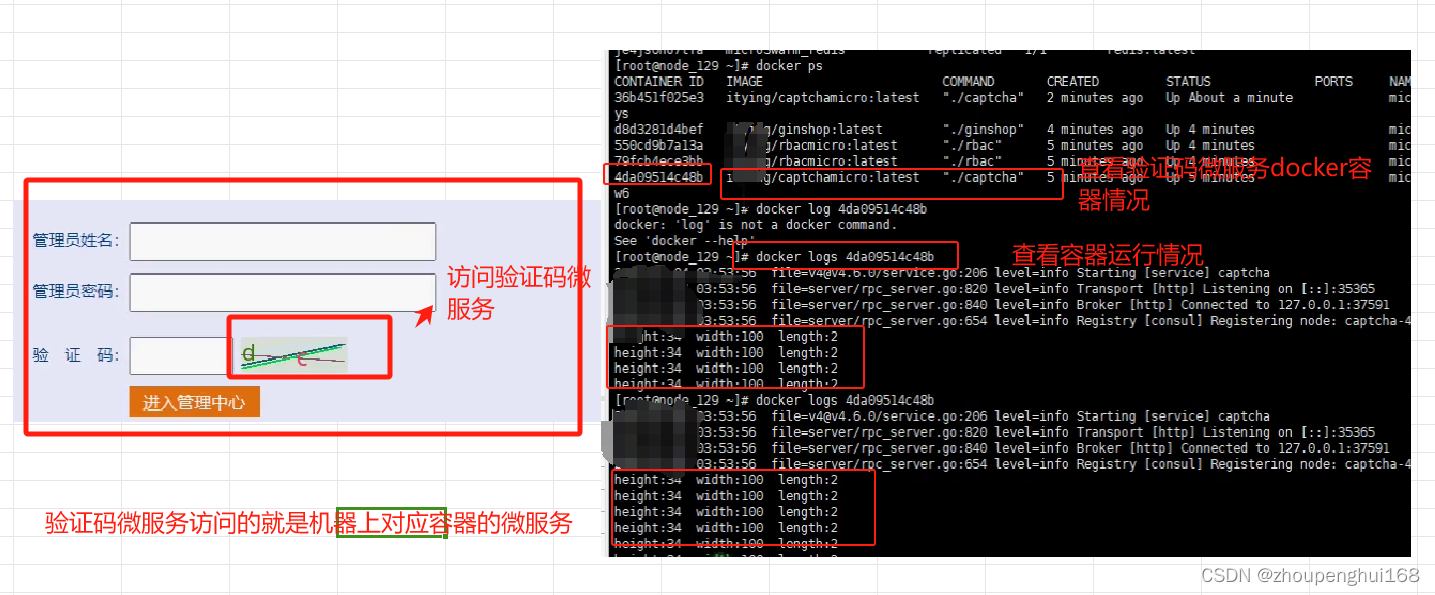

台启动redis(2).准备程序

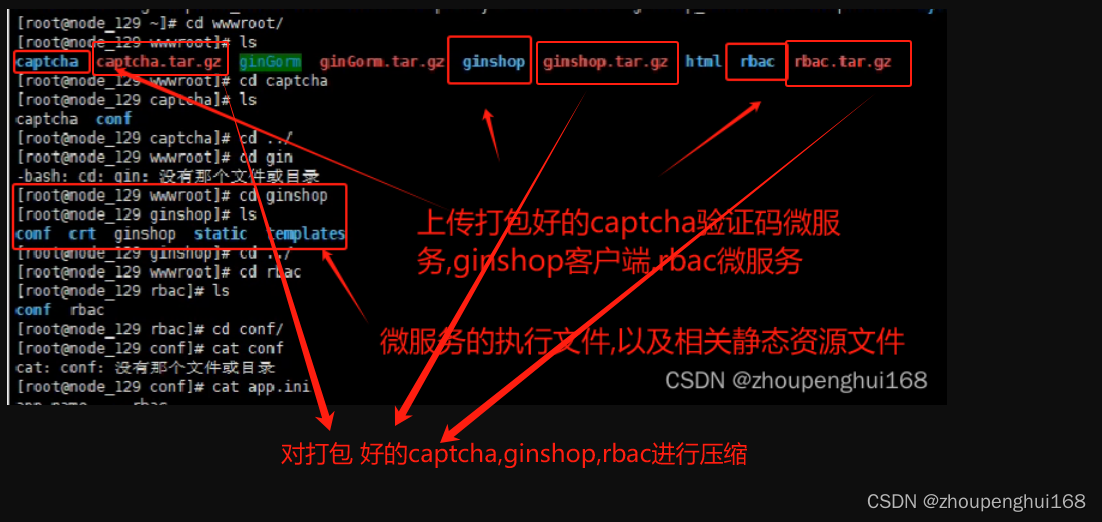

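Carry out the following steps on every server that will run the microservices. Here the three servers 192.168.31.129, 192.168.31.132 and 192.168.31.130 are used.

1). Packaging the project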

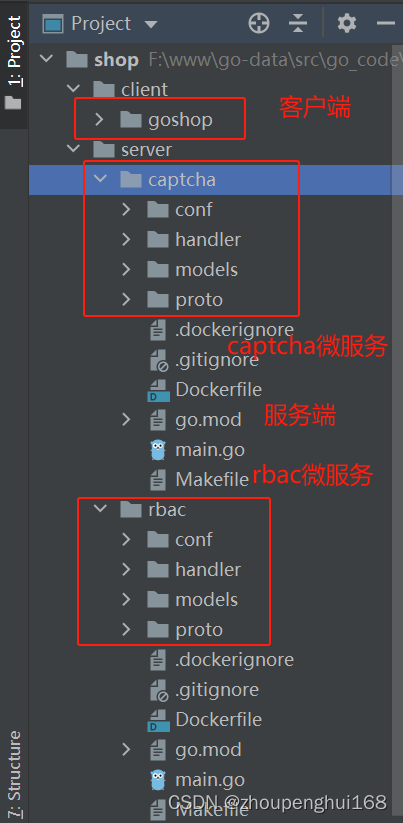

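The rbac and captcha microservices from the earlier Gin project serve as the example. The rbac microservice code, the captcha microservice code and the microservice client code all need to be packaged; for how to package them, see the earlier chapter [Docker] 6. Automatically deploying nodejs and golang projects with Docker.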

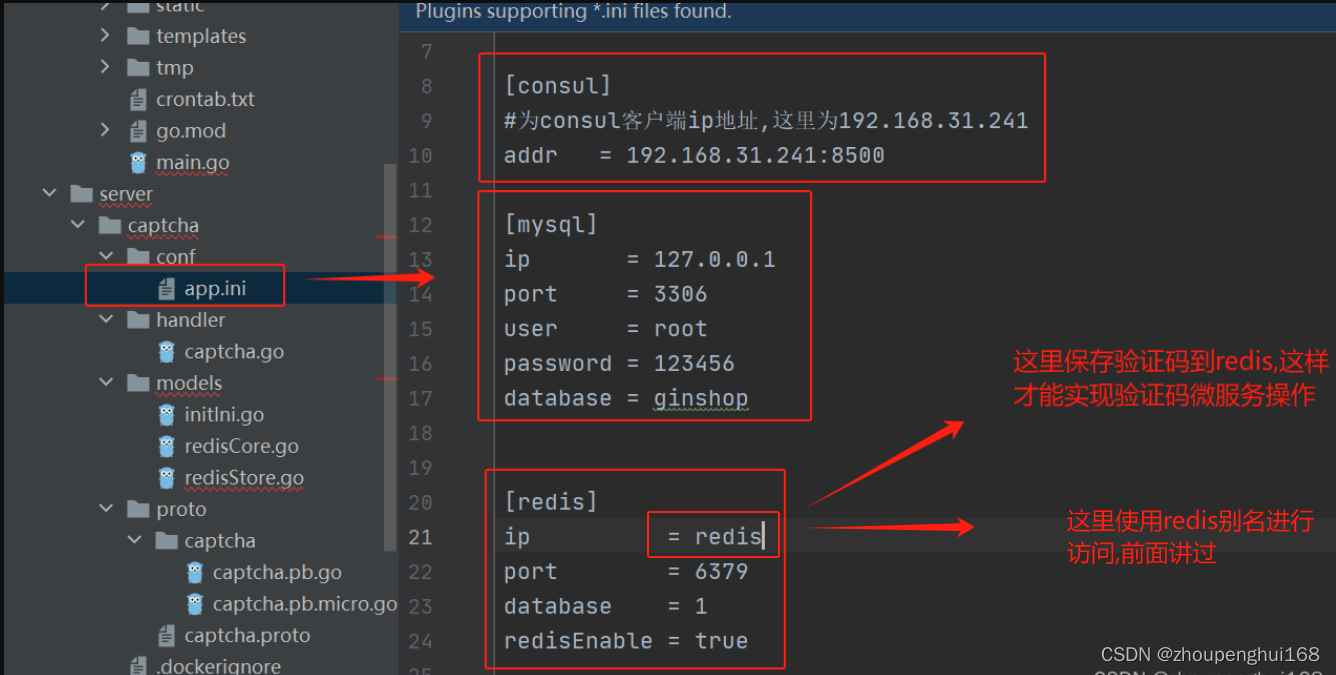

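Note:

Upload the packaged files together with the configuration and static resource files they need, such as app.ini, statics and view.

The microservices are deployed on the three machines 192.168.31.129, 192.168.31.132 and 192.168.31.130, so upload the packaged projects to all three.

When packaging, note that app.ini in each microservice must point the Consul address at the Consul client address set up above, and the MySQL settings at your own MySQL server.

2). Compressing the packaged files

3). Configuring the Dockerfiles

captcha microservice

captcha_Dockerfile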

FROM centos:centos7

ADD /wwwroot/captcha.tar.gz /root

WORKDIR /root

RUN chmod -R 777 captcha

WORKDIR /root/captcha

ENTRYPOINT ["./captcha"]

rbac microservice

rbac_Dockerfile

FROM centos:centos7

ADD /wwwroot/rbac.tar.gz /root

WORKDIR /root

RUN chmod -R 777 rbac

WORKDIR /root/rbac

ENTRYPOINT ["./rbac"]

ginshop

ginshop_Dockerfile

FROM centos:centos7

ADD /wwwroot/ginshop.tar.gz /root

WORKDIR /root

RUN chmod -R 777 ginshop

WORKDIR /root/ginshop

ENTRYPOINT ["./ginshop"]

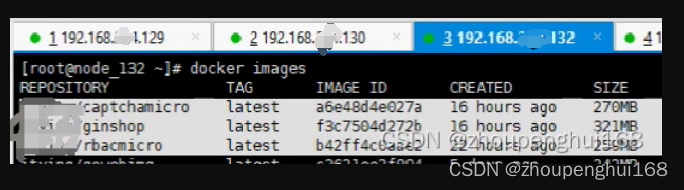

4). Building the corresponding images on each server to be deployed

docker build -f captcha_Dockerfile -t docker.io/captchamicro:latest .

docker build -f rbac_Dockerfile -t docker.io/rbacmicro:latest .

docker build -f ginshop_Dockerfile -t docker.io/ginshop:latest .
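Because the three builds differ only in the Dockerfile name and the image tag, they can be wrapped in a small loop that stops at the first failure. This is just a convenience sketch; the function name build_all is hypothetical, and the Dockerfile names and tags are the ones used above.

```shell
#!/bin/sh
# build_all: build the three images from the Dockerfiles named in this section.
# Each entry has the form "<Dockerfile>:<image name>".
build_all() {
  for entry in \
    "captcha_Dockerfile:captchamicro" \
    "rbac_Dockerfile:rbacmicro" \
    "ginshop_Dockerfile:ginshop"
  do
    file=${entry%%:*}   # text before the first colon
    tag=${entry#*:}     # text after the first colon
    docker build -f "$file" -t "docker.io/$tag:latest" . || return 1
  done
}

# Example: run in the directory that holds the Dockerfiles and /wwwroot archives:
#   build_all
```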

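(3). Configuring docker-compose.yml

version: "3"
services:
  redis: # Redis; it can also be configured separately and placed on a dedicated server
    image: redis
    restart: always
    deploy:
      replicas: 1 # number of replicas
  captcha_micro: # captcha microservice
    image: captchamicro # image name: the captcha microservice image built from the packaged project
    restart: always
    deploy:
      replicas: 6 # number of replicas
      resources: # resources
        limits: # resource limits
          cpus: "0.3" # the container may use at most 30% of a CPU
          memory: 500M # the container may use at most 500M of memory
      restart_policy: # container restart policy, used in place of the restart option
        condition: on-failure # restart only when the application inside the container fails
  rbac_micro: # rbac microservice
    image: rbacmicro
    restart: always
    deploy:
      replicas: 6 # number of replicas
      resources: # resources
        limits: # resource limits
          cpus: "0.3" # the container may use at most 30% of a CPU
          memory: 500M # the container may use at most 500M of memory
      restart_policy: # container restart policy, used in place of the restart option
        condition: on-failure # restart only when the application inside the container fails
    depends_on:
      - captcha_micro
  ginshop: # client microservice
    image: ginshop
    restart: always
    ports:
      - 8080:8080
    deploy:
      replicas: 6 # number of replicas
      resources: # resources
        limits: # resource limits
          cpus: "0.3" # the container may use at most 30% of a CPU
          memory: 500M # the container may use at most 500M of memory
      restart_policy: # container restart policy, used in place of the restart option
        condition: on-failure # restart only when the application inside the container fails
    depends_on:
      - rbac_micro

(4). Creating the cluster and deploying the microservice cluster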

For reference, see: [Docker] 10. Docker Swarm Explained

# Initialize the cluster on 192.168.31.129 with the following command:

[root@manager_129 ~]# docker swarm init --advertise-addr 192.168.31.129

Swarm initialized: current node (qu1ydd2t6occ8fo76rvaksidd) is now a manager.

To add a worker to this swarm, run the following command:

docker swarm join --token SWMTKN-1-6afkz1ub7m8q37cehxmjiirs6a0r25qt1hzf0no1c0xcny55qc-d3gtv0qcsuhivozbomp4d73ha 192.168.31.129:2377

To add a manager to this swarm, run 'docker swarm join-token manager' and follow the instructions.

Run the join command above on 192.168.31.132 and 192.168.31.130 in turn:

docker swarm join --token SWMTKN-1-6afkz1ub7m8q37cehxmjiirs6a0r25qt1hzf0no1c0xcny55qc-d3gtv0qcsuhivozbomp4d73ha 192.168.31.129:2377

These worker nodes then join the cluster. Running docker node ls on the manager node lists all nodes in the cluster. Additional manager nodes can also be created.

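(5). Deploying the project

In the directory containing docker-compose.yml, deploy the microservices to the Swarm cluster; for the command, see [Docker] 10. Docker Swarm Explained.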
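The deploy command itself is not shown in this section. A compose file with deploy keys is normally deployed to a Swarm with docker stack deploy, so a minimal sketch might look like the following. The stack name ginshop_stack and the function names are hypothetical; the original post does not give a stack name.

```shell
#!/bin/sh
# STACK_NAME is a hypothetical name; the original post does not specify one.
STACK_NAME=${STACK_NAME:-ginshop_stack}

# deploy_stack: deploy docker-compose.yml to the Swarm (run on a manager node,
# in the directory that contains docker-compose.yml).
deploy_stack() {
  docker stack deploy --compose-file docker-compose.yml "$STACK_NAME"
}

# show_stack: list the stack's services together with their replica counts.
show_stack() {
  docker stack services "$STACK_NAME"
}

# Example:
#   deploy_stack && show_stack
```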

(6). Accessing the project

The front end of the project is available at 192.168.31.129:8080, and the admin back end at 192.168.31.129:8080/admin.

(7). Configuring nginx load balancing
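No nginx configuration is given for this step. As a rough sketch, assuming nginx runs on a separate machine and proxies to port 8080 on the three Swarm nodes, the configuration could be generated as follows. The function name render_nginx_conf and the upstream name ginshop_backend are hypothetical, and the output is meant for a file included inside nginx's http block.

```shell
#!/bin/sh
# render_nginx_conf NODE_IP...: print an nginx config that round-robins across the nodes.
render_nginx_conf() {
  echo "upstream ginshop_backend {"
  for ip in "$@"; do
    echo "    server $ip:8080;"
  done
  echo "}"
  # The quoted heredoc delimiter keeps nginx variables such as $host unexpanded.
  cat <<'EOF'
server {
    listen 80;
    location / {
        proxy_pass http://ginshop_backend;
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
    }
}
EOF
}

render_nginx_conf 192.168.31.129 192.168.31.132 192.168.31.130
```

The printed text can be redirected into a file of your choice and checked with nginx -t before reloading nginx.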

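That completes the Docker Consul cluster setup, the microservice deployment, and the Consul cluster + Swarm cluster microservice project. Writing this up took some effort, so a like is much appreciated.

[Previous section] [Docker] 11. Docker Swarm cluster raft algorithm, Docker Swarm web management tools

Related chapter: [golang gin framework] 45. Gin shop project: microservices in practice, the admin Rbac microservice and role-permission association

Original article: https://blog.csdn.net/zhoupenghui168/article/details/134611262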
