Preface:

I recently ran into this problem while deploying Prometheus. It feels like a classic, so it is worth writing down.

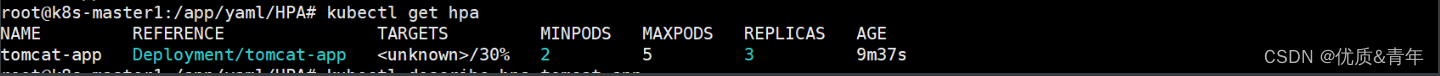

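The symptom: when deploying the main Prometheus server, no pods were visible, only the deployment. In a panic I assumed the cluster itself was broken and rebuilt it. The situation is shown below.

Note: UP-TO-DATE 0 means no replica has been created from the current pod template, and AVAILABLE 0 means no pod is available.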

    [root@node4 yaml]# k get deployments.apps -n monitor-sa
    NAME                READY   UP-TO-DATE   AVAILABLE   AGE
    prometheus-server   0/2     0            0           2m5s
    [root@node4 yaml]# k get po -n monitor-sa
    NAME                  READY   STATUS    RESTARTS   AGE
    node-exporter-6ttbl   1/1     Running   0          23h
    node-exporter-7ls5t   1/1     Running   0          23h
    node-exporter-r287q   1/1     Running   0          23h
    node-exporter-z85dm   1/1     Running   0          23h

The deployment file is as follows.

Note that it references a service account: serviceAccountName: monitor

    [root@node4 yaml]# cat prometheus-deploy.yaml
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: prometheus-server
      namespace: monitor-sa
      labels:
        app: prometheus
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: prometheus
          component: server
        #matchExpressions:
        #- {key: app, operator: In, values: [prometheus]}
        #- {key: component, operator: In, values: [server]}
      template:
        metadata:
          labels:
            app: prometheus
            component: server
          annotations:
            prometheus.io/scrape: 'false'
        spec:
          nodeName: node4
          serviceAccountName: monitor
          containers:
          - name: prometheus
            image: prom/prometheus:v2.2.1
            imagePullPolicy: IfNotPresent
            command:
            - prometheus
            - --config.file=/etc/prometheus/prometheus.yml
            - --storage.tsdb.path=/prometheus
            - --storage.tsdb.retention=720h
            ports:
            - containerPort: 9090
              protocol: TCP
            volumeMounts:
            - mountPath: /etc/prometheus/prometheus.yml
              name: prometheus-config
              subPath: prometheus.yml
            - mountPath: /prometheus/
              name: prometheus-storage-volume
          volumes:
          - name: prometheus-config
            configMap:
              name: prometheus-config
              items:
              - key: prometheus.yml
                path: prometheus.yml
                mode: 0644
          - name: prometheus-storage-volume
            hostPath:
              path: /data
              type: Directory

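Solution:

So what should we do in this situation? First, don't panic. Second, the Deployment has an easily overlooked feature: opening it for editing shows its detailed current state in the status field, which tells us a great deal. The same field is also visible read-only with kubectl get deployment -o yaml.

The command is kubectl edit deployment -n <namespace> <deployment-name>. In this example the output looks like this (the beginning is omitted):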

                path: prometheus.yml
              name: prometheus-config
            name: prometheus-config
          - hostPath:
              path: /data
              type: Directory
            name: prometheus-storage-volume
    status:
      conditions:
      - lastTransitionTime: "2023-11-22T15:21:06Z"
        lastUpdateTime: "2023-11-22T15:21:06Z"
        message: Deployment does not have minimum availability.
        reason: MinimumReplicasUnavailable
        status: "False"
        type: Available
      - lastTransitionTime: "2023-11-22T15:21:06Z"
        lastUpdateTime: "2023-11-22T15:21:06Z"
        message: 'pods "prometheus-server-78bbb77dd7-" is forbidden: error looking up
          service account monitor-sa/monitor: serviceaccount "monitor" not found'
        reason: FailedCreate
        status: "True"
        type: ReplicaFailure
      - lastTransitionTime: "2023-11-22T15:31:07Z"
        lastUpdateTime: "2023-11-22T15:31:07Z"
        message: ReplicaSet "prometheus-server-78bbb77dd7" has timed out progressing.
        reason: ProgressDeadlineExceeded
        status: "False"
        type: Progressing
      observedGeneration: 1
      unavailableReplicas: 2

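There are three messages. The first, Deployment does not have minimum availability, is the error mentioned in the title; when something goes wrong, the dashboard shows this one first. The second explains where the problem actually is: in this case, the service account named monitor was not found. The third says that the ReplicaSet controlled by this deployment timed out while progressing; it is only a consequence and does not matter here. The second message is the key to solving the problem.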
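The triage described above can also be scripted. The following is only a minimal sketch in Python, assuming the status has already been loaded into a dict (for example from the output of kubectl get deployment -o json); the function names unhealthy_conditions and root_cause are made up for illustration, and the sample data is a trimmed copy of the status shown earlier.

```python
# Trimmed copy of the failing deployment's status from this article,
# in the shape `kubectl get deployment <name> -o json` would return it.
FAILED_STATUS = {
    "conditions": [
        {"type": "Available", "status": "False",
         "reason": "MinimumReplicasUnavailable",
         "message": "Deployment does not have minimum availability."},
        {"type": "ReplicaFailure", "status": "True",
         "reason": "FailedCreate",
         "message": 'pods "prometheus-server-78bbb77dd7-" is forbidden: '
                    'error looking up service account monitor-sa/monitor: '
                    'serviceaccount "monitor" not found'},
        {"type": "Progressing", "status": "False",
         "reason": "ProgressDeadlineExceeded",
         "message": 'ReplicaSet "prometheus-server-78bbb77dd7" '
                    'has timed out progressing.'},
    ],
    "observedGeneration": 1,
    "unavailableReplicas": 2,
}


def unhealthy_conditions(status):
    """Return the conditions that signal a problem.

    ReplicaFailure is a problem when its status is "True";
    Available and Progressing are problems when their status is "False".
    """
    bad = []
    for cond in status.get("conditions", []):
        if cond["type"] == "ReplicaFailure":
            if cond["status"] == "True":
                bad.append(cond)
        elif cond["status"] == "False":
            bad.append(cond)
    return bad


def root_cause(status):
    """Prefer the ReplicaFailure message, which names the concrete cause."""
    bad = unhealthy_conditions(status)
    for cond in bad:
        if cond["type"] == "ReplicaFailure":
            return cond["message"]
    return bad[0]["message"] if bad else None


if __name__ == "__main__":
    print(root_cause(FAILED_STATUS))
```

Preferring ReplicaFailure mirrors the reasoning above: the Available and Progressing conditions only report that something is wrong, while ReplicaFailure carries the reason the pods could not be created.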

Appendix: the status of a healthy deployment.

This status tells us that the deployment has one replica and was deployed successfully, so the first message is Deployment has minimum availability.

          serviceAccount: kube-state-metrics
          serviceAccountName: kube-state-metrics
          terminationGracePeriodSeconds: 30
    status:
      availableReplicas: 1
      conditions:
      - lastTransitionTime: "2023-11-21T14:56:14Z"
        lastUpdateTime: "2023-11-21T14:56:14Z"
        message: Deployment has minimum availability.
        reason: MinimumReplicasAvailable
        status: "True"
        type: Available
      - lastTransitionTime: "2023-11-21T14:56:13Z"
        lastUpdateTime: "2023-11-21T14:56:14Z"
        message: ReplicaSet "kube-state-metrics-57794dcf65" has successfully progressed.
        reason: NewReplicaSetAvailable
        status: "True"
        type: Progressing
      observedGeneration: 1
      readyReplicas: 1
      replicas: 1
      updatedReplicas: 1

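The concrete fix:

Based on the error above, we need a service account. If you do not want to grant it too much privilege, you have to write your own RBAC rules; here I was lazy and bound it to cluster-admin. (The -n flag on the second command has no effect, because a ClusterRoleBinding is cluster-scoped.) The commands are:

    [root@node4 yaml]# k create sa -n monitor-sa monitor
    serviceaccount/monitor created
    [root@node4 yaml]# k create clusterrolebinding monitor-clusterrolebinding -n monitor-sa --clusterrole=cluster-admin --serviceaccount=monitor-sa:monitor

Deploying again then succeeded: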

    [root@node4 yaml]# k get po -n monitor-sa -owide
    NAME                                 READY   STATUS    RESTARTS        AGE   IP               NODE    NOMINATED NODE   READINESS GATES
    node-exporter-6ttbl                  1/1     Running   0               24h   192.168.123.12   node2   <none>           <none>
    node-exporter-7ls5t                  1/1     Running   0               24h   192.168.123.11   node1   <none>           <none>
    node-exporter-r287q                  1/1     Running   1 (2m57s ago)   24h   192.168.123.14   node4   <none>           <none>
    node-exporter-z85dm                  1/1     Running   0               24h   192.168.123.13   node3   <none>           <none>
    prometheus-server-78bbb77dd7-6smlt   1/1     Running   0               20s   10.244.41.19     node4   <none>           <none>
    prometheus-server-78bbb77dd7-fhf5k   1/1     Running   0               20s   10.244.41.18     node4   <none>           <none>
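For readers who would rather not bind cluster-admin, here is a rough sketch of what "writing your own permission file" could look like, generated from Python as JSON (which kubectl also accepts). The role name prometheus-read-only and the list of resources are assumptions modeled on commonly used read-only Prometheus setups; they are not from the original deployment and should be adjusted to what your scrape configuration actually needs.

```python
import json

# Read-only verbs; nothing here lets the service account modify the cluster.
READ_ONLY_VERBS = ["get", "list", "watch"]


def read_only_rbac(namespace, service_account, name="prometheus-read-only"):
    """Build a ClusterRole plus ClusterRoleBinding as one List manifest.

    `name` is a hypothetical role name chosen for this example.
    """
    cluster_role = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRole",
        "metadata": {"name": name},
        "rules": [
            {
                # Assumed set of core resources used for service discovery.
                "apiGroups": [""],
                "resources": ["nodes", "nodes/metrics", "services",
                              "endpoints", "pods"],
                "verbs": READ_ONLY_VERBS,
            },
            {"nonResourceURLs": ["/metrics"], "verbs": ["get"]},
        ],
    }
    binding = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRoleBinding",
        "metadata": {"name": name},
        "roleRef": {
            "apiGroup": "rbac.authorization.k8s.io",
            "kind": "ClusterRole",
            "name": name,
        },
        "subjects": [
            {"kind": "ServiceAccount", "name": service_account,
             "namespace": namespace},
        ],
    }
    return {"apiVersion": "v1", "kind": "List",
            "items": [cluster_role, binding]}


if __name__ == "__main__":
    # Same namespace and service account as in the commands above.
    print(json.dumps(read_only_rbac("monitor-sa", "monitor"), indent=2))
```

If the script were saved under a name such as rbac.py (a hypothetical filename), its output could be piped into kubectl apply -f - in place of the cluster-admin binding.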

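Summary:

A missing service account can make the pods look hidden: the deployment is created, but the pods are never created. In other words, the service account is a required but implicit dependency of this deployment. A ConfigMap that the manifest references but that was not created beforehand is a similar implicit dependency: the deployment is created without complaint, although in that case the pod objects do appear and then stay stuck in ContainerCreating because the volume cannot be mounted. A namespace is different: it is a required, explicit dependency, and if it does not exist the creation is rejected outright.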
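Since these dependencies are implicit, it can help to list them before applying a manifest. The sketch below is one possible way to do that in Python, assuming the manifest has already been parsed into a dict; implicit_dependencies is a made-up helper name, the sample dict is a trimmed copy of the Prometheus deployment from this article, and only ConfigMap volumes are inspected (not environment variable references).

```python
# Trimmed copy of the Prometheus deployment from this article.
DEPLOYMENT = {
    "metadata": {"name": "prometheus-server", "namespace": "monitor-sa"},
    "spec": {
        "template": {
            "spec": {
                "serviceAccountName": "monitor",
                "volumes": [
                    {"name": "prometheus-config",
                     "configMap": {"name": "prometheus-config"}},
                    {"name": "prometheus-storage-volume",
                     "hostPath": {"path": "/data", "type": "Directory"}},
                ],
            }
        }
    },
}


def implicit_dependencies(deployment):
    """Collect the objects the pod template refers to by name.

    These must exist in the deployment's namespace before the pods
    can be created and started.
    """
    pod_spec = deployment["spec"]["template"]["spec"]
    config_maps = set()
    for volume in pod_spec.get("volumes", []):
        if "configMap" in volume:
            config_maps.add(volume["configMap"]["name"])
    return {
        # Pods without an explicit name use the "default" service account.
        "serviceAccount": pod_spec.get("serviceAccountName", "default"),
        "configMaps": config_maps,
    }


if __name__ == "__main__":
    print(implicit_dependencies(DEPLOYMENT))
```

Each name returned can then be checked with kubectl get sa or kubectl get configmap in the target namespace before the deployment is applied.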

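As another example of the same symptom, let us create a ResourceQuota for the default namespace, defined as follows.

It limits the default namespace to at most 4 pods and 20 services, with 10Gi of memory and 5.5 CPUs for both requests and limits.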

    [root@node4 yaml]# cat quota-nginx.yaml
    apiVersion: v1
    kind: ResourceQuota
    metadata:
      name: quota
      namespace: default
    spec:
      hard:
        requests.cpu: "5.5"
        limits.cpu: "5.5"
        requests.memory: 10Gi
        limits.memory: 10Gi
        pods: "4"
        services: "20"

    [root@node4 yaml]# cat nginx.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      annotations:
        deployment.kubernetes.io/revision: "1"
      creationTimestamp: "2023-11-22T16:13:33Z"
      generation: 1
      labels:
        app: nginx
      name: nginx
      namespace: default
      resourceVersion: "16411"
      uid: e9a5cdc5-c6f0-45fb-a001-fcdd695eb925
    spec:
      progressDeadlineSeconds: 600
      replicas: 6
      revisionHistoryLimit: 10
      selector:
        matchLabels:
          app: nginx
      strategy:
        rollingUpdate:
          maxSurge: 25%
          maxUnavailable: 25%
        type: RollingUpdate
      template:
        metadata:
          creationTimestamp: null
          labels:
            app: nginx
        spec:
          containers:
          - image: nginx:1.18
            imagePullPolicy: IfNotPresent
            name: nginx
            terminationMessagePath: /dev/termination-log
            terminationMessagePolicy: File
            resources:
              limits:
                cpu: 1
                memory: 1Gi
              requests:
                cpu: 500m
                memory: 512Mi
          dnsPolicy: ClusterFirst
          restartPolicy: Always
          schedulerName: default-scheduler
          securityContext: {}
          terminationGracePeriodSeconds: 30

After creating it, only four pods exist, so the quota is working:

    [root@node4 yaml]# k get po
    NAME                     READY   STATUS    RESTARTS   AGE
    nginx-54f9858f64-g65pk   1/1     Running   0          4m50s
    nginx-54f9858f64-h42vf   1/1     Running   0          4m50s
    nginx-54f9858f64-s776t   1/1     Running   0          4m50s
    nginx-54f9858f64-wl7wz   1/1     Running   0          4m50s

So where are the other two pods?

    [root@node4 yaml]# k get deployments.apps nginx -oyaml |grep message
        message: Deployment does not have minimum availability.
        message: 'pods "nginx-54f9858f64-p8rxf" is forbidden: exceeded quota: quota, requested:
        message: ReplicaSet "nginx-54f9858f64" is progressing.
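The count of four follows directly from the numbers in the two files above. As a rough check, the arithmetic can be written out in Python; this sketch assumes the default namespace holds no other pods that consume the quota, expresses CPU in millicores and memory in Mi, and uses the made-up helper names admitted_pods and binding_constraints.

```python
# Hard limits from quota-nginx.yaml (CPU in millicores, memory in Mi).
QUOTA = {
    "requests.cpu": 5500,
    "limits.cpu": 5500,
    "requests.memory": 10 * 1024,
    "limits.memory": 10 * 1024,
    "pods": 4,
}

# What one nginx pod from nginx.yaml consumes against each limit.
PER_POD = {
    "requests.cpu": 500,
    "limits.cpu": 1000,
    "requests.memory": 512,
    "limits.memory": 1024,
    "pods": 1,
}


def pods_allowed_by(quota, per_pod):
    """For each quota entry, how many identical pods fit under it."""
    return {key: quota[key] // per_pod[key] for key in per_pod}


def admitted_pods(quota, per_pod, desired):
    """Number of replicas that can be created before the quota rejects more."""
    return min(desired, min(pods_allowed_by(quota, per_pod).values()))


def binding_constraints(quota, per_pod):
    """Quota entries that are reached first."""
    caps = pods_allowed_by(quota, per_pod)
    lowest = min(caps.values())
    return sorted(key for key, cap in caps.items() if cap == lowest)


if __name__ == "__main__":
    print(admitted_pods(QUOTA, PER_POD, desired=6))
    print(binding_constraints(QUOTA, PER_POD))
```

With these numbers the pod count is the entry reached first; limits.cpu would allow only five pods, so raising pods alone would still leave one of the six replicas uncreated.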

The fix is just as simple: adjust the quota. How to adjust it is not covered here.

Original article: https://blog.csdn.net/alwaysbefine/article/details/134565462
