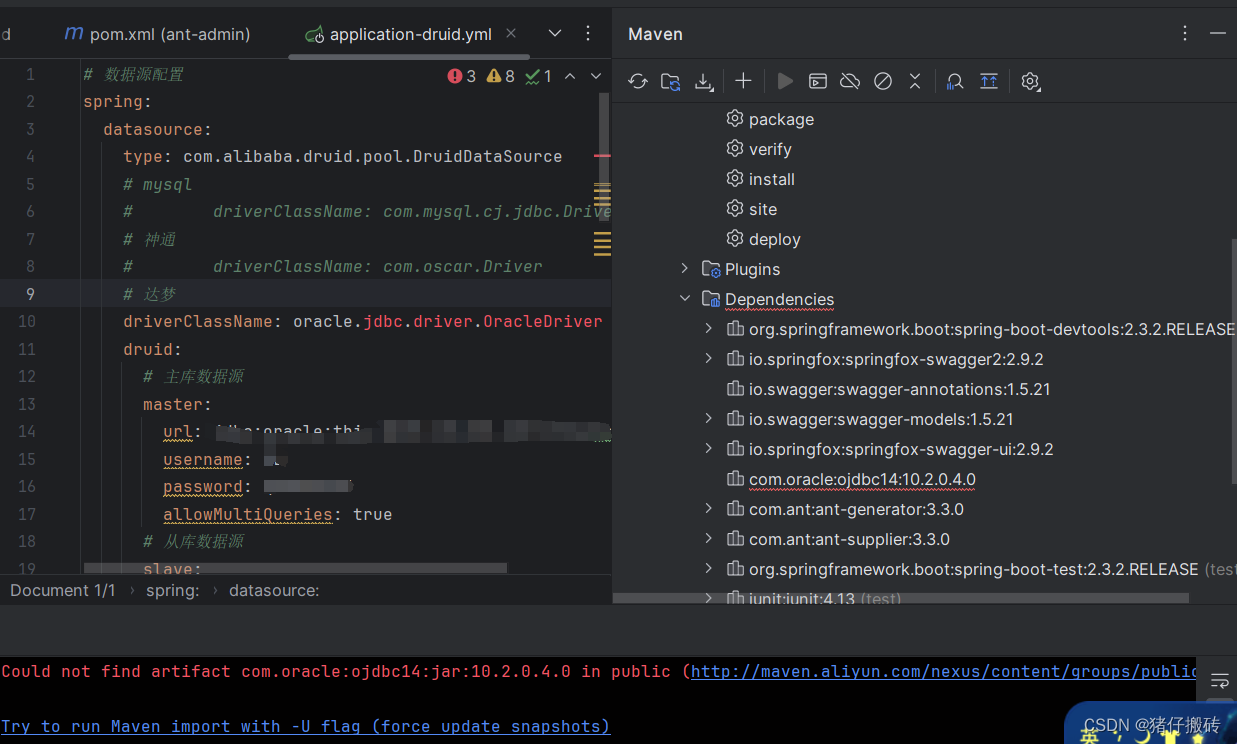

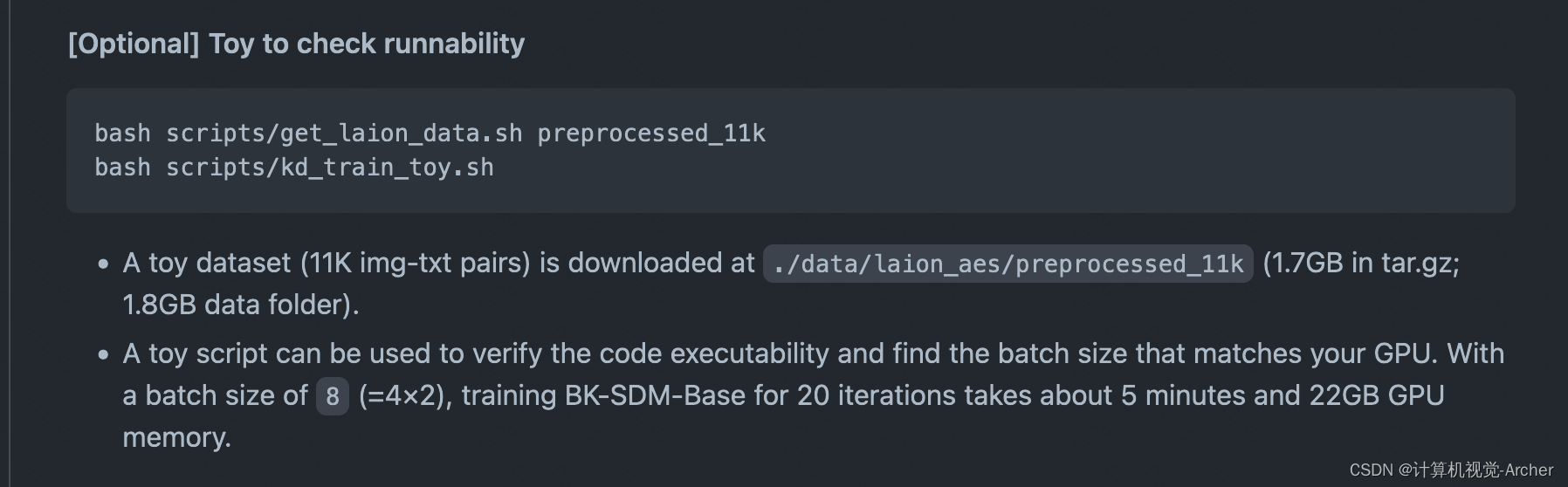

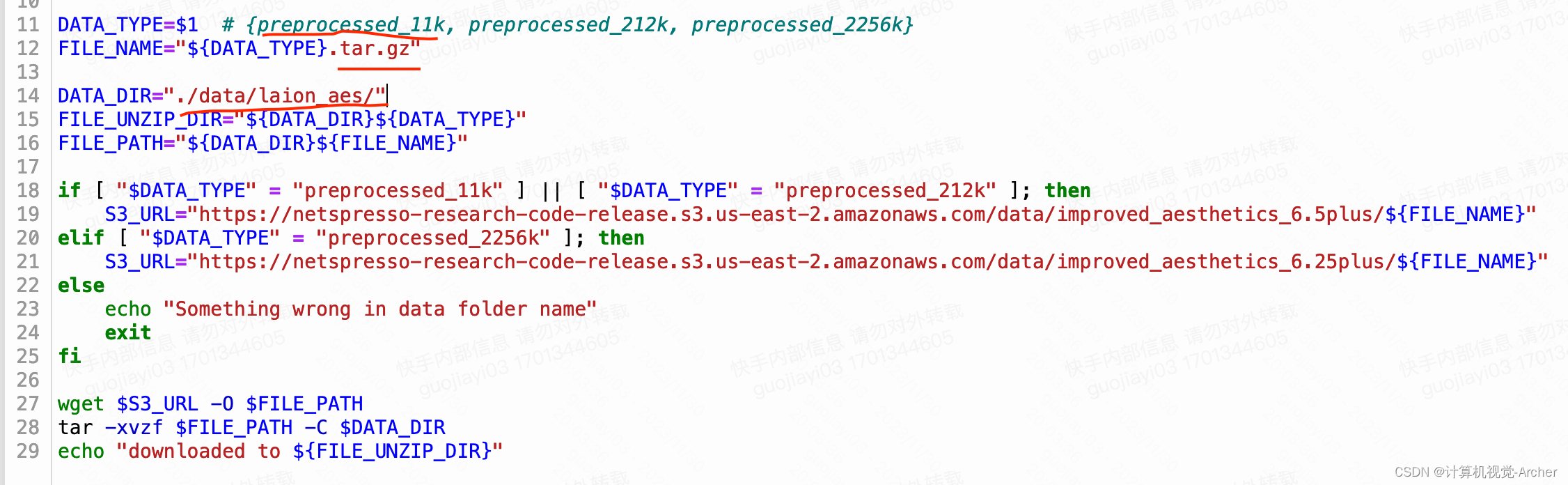

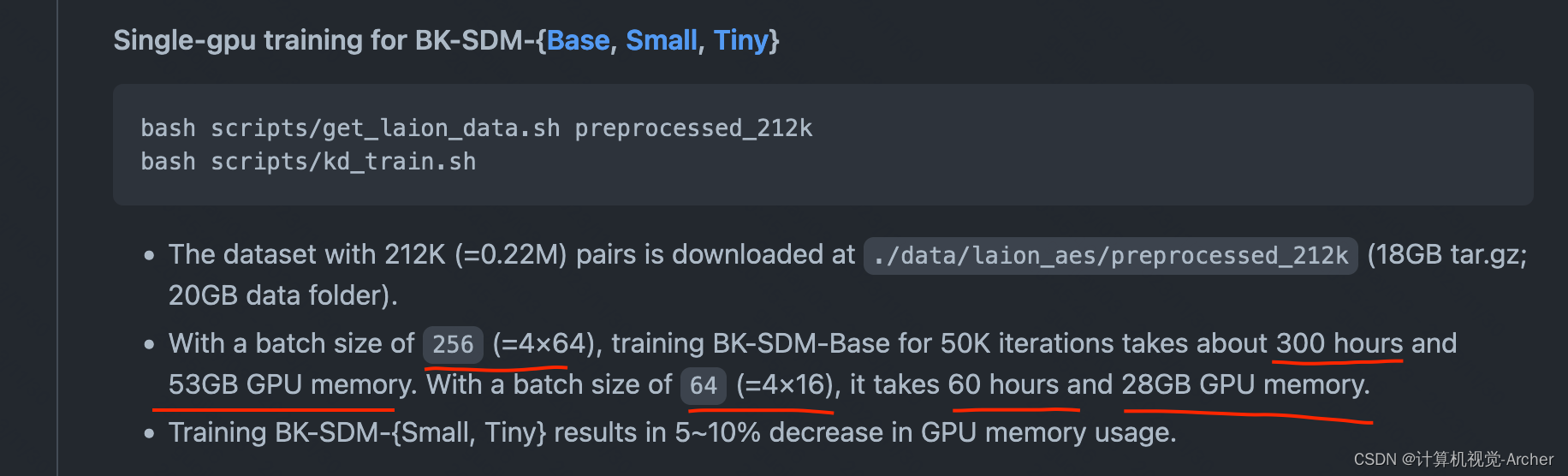

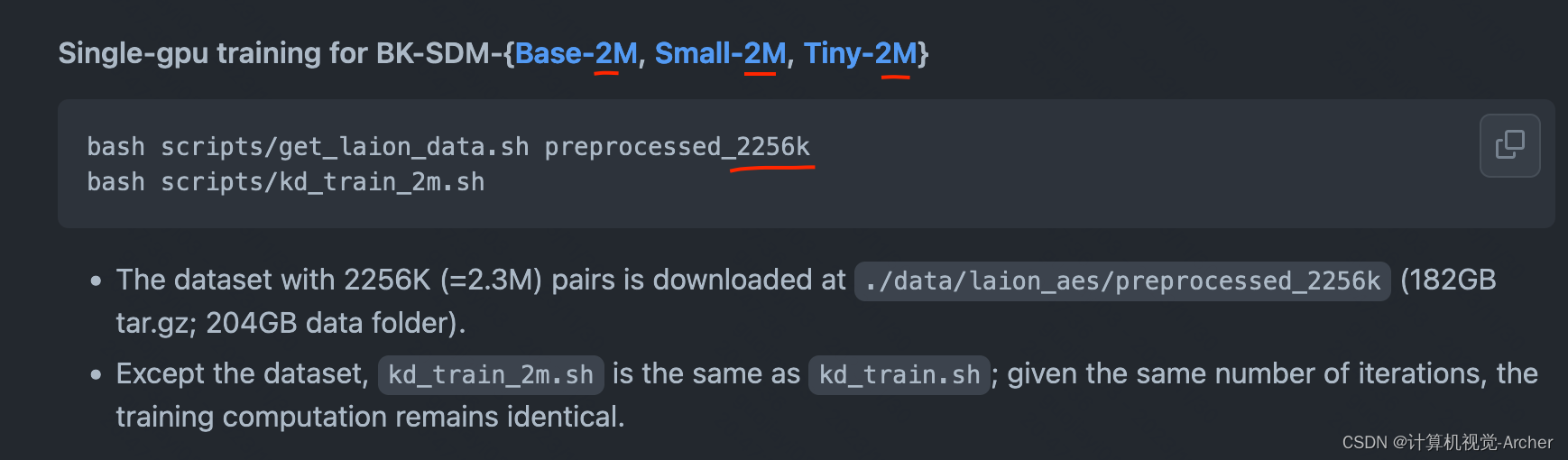

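Summary: After I changed the download file name, the archive at https://… …/preprocessed_11k.tar.gz can also be downloaded by pasting the URL straight into the browser's address bar. To check that training works, first download the dataset with get_laion_data.sh, then run kd_train_toy.sh. With a batch size of 8 (=4×2), training BK-SDM-Base for 20 iterations takes about 5 minutes and 22GB of GPU memory. $FILE_PATH is the download path, ./data/laion_aes/preprocessed_11k. The post also covers single-GPU training of BK-SDM{Base, Small, Tiny}-2.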
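The manual download described above can also be sketched in Python. This is only an illustration, not the contents of get_laion_data.sh: the helper name `download_and_extract` is hypothetical, and the archive URL is left as a caller-supplied argument because it is truncated in the text. The default directory mirrors the ./data/laion_aes path mentioned above.

```python
import tarfile
import urllib.request
from pathlib import Path


def download_and_extract(url: str, data_dir: str = "./data/laion_aes") -> Path:
    """Download a .tar.gz archive into data_dir and unpack it there.

    Hypothetical helper for illustration; `url` must be supplied by the caller.
    """
    target_dir = Path(data_dir)
    target_dir.mkdir(parents=True, exist_ok=True)

    # The local archive keeps the file name found at the end of the URL.
    archive_path = target_dir / url.rsplit("/", 1)[-1]
    if not archive_path.exists():
        urllib.request.urlretrieve(url, str(archive_path))

    # Unpack next to the archive.
    with tarfile.open(archive_path, "r:gz") as archive:
        archive.extractall(target_dir)

    # "preprocessed_11k.tar.gz" -> "<data_dir>/preprocessed_11k"
    name = archive_path.name
    if name.endswith(".tar.gz"):
        name = name[: -len(".tar.gz")]
    return target_dir / name
```

If the archive unpacks into a folder named after itself, the returned path corresponds to the ./data/laion_aes/preprocessed_11k directory that the training scripts read from.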

Note on the torch versions we've used

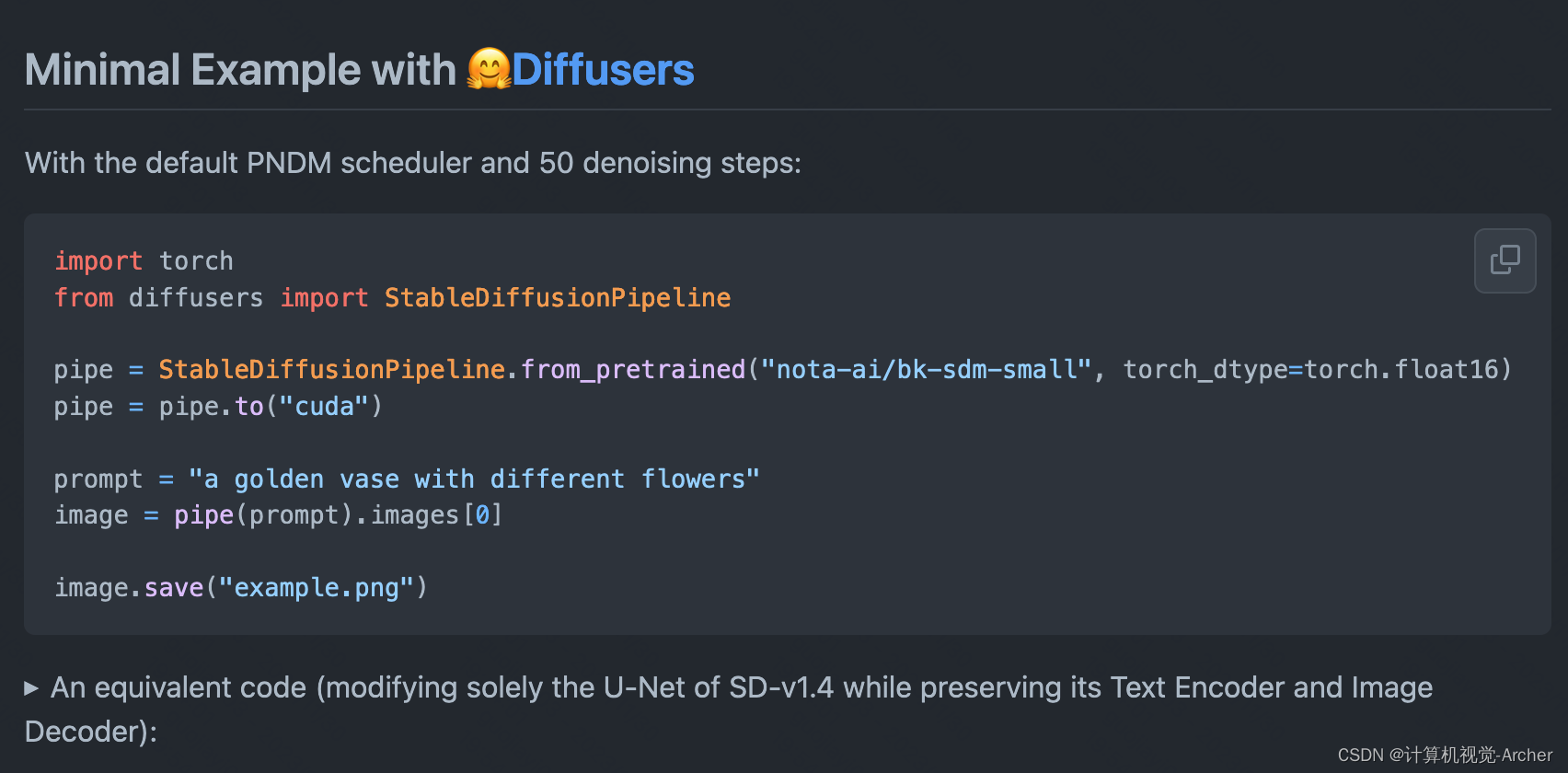

Equivalent code (modifying only the U-Net of SD-v1.4 while keeping its text encoder and image decoder):
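A minimal sketch of that idea with diffusers follows. The two Hub model IDs are assumptions that do not appear in the text above, and the function name `load_bk_sdm_pipeline` is hypothetical. The point is only that the pipeline is loaded from SD-v1.4 and nothing but its `unet` attribute is replaced, so the text encoder and the image decoder (VAE) stay untouched.

```python
BASE_MODEL_ID = "CompVis/stable-diffusion-v1-4"  # assumed Hub ID for SD-v1.4
COMPRESSED_UNET_ID = "nota-ai/bk-sdm-small"      # assumed Hub ID for a BK-SDM checkpoint


def load_bk_sdm_pipeline(device: str = "cuda"):
    """Load SD-v1.4 and swap in only the compressed U-Net."""
    # Imported lazily so this module can be imported without torch/diffusers.
    import torch
    from diffusers import StableDiffusionPipeline, UNet2DConditionModel

    # Text encoder, VAE and scheduler all come from the original SD-v1.4.
    pipe = StableDiffusionPipeline.from_pretrained(
        BASE_MODEL_ID, torch_dtype=torch.float16
    )
    # Only the U-Net is replaced.
    pipe.unet = UNet2DConditionModel.from_pretrained(
        COMPRESSED_UNET_ID, subfolder="unet", torch_dtype=torch.float16
    )
    return pipe.to(device)
```

With a GPU available, `pipe = load_bk_sdm_pipeline()` followed by `pipe("a tropical bird sitting on a branch of a tree").images[0].save("example.png")` would generate one image; the prompt here is just an example.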

Our code was based on train_text_to_image.py of Diffusers 0.15.0.dev0. To access the latest version, use this link.

BK-SDM uses diffusers version 0.15.

My diffusers version is considerably newer: 0.24.0.
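Since the installed diffusers is newer than the one the training script was written against, a quick check before running can flag the mismatch. The sketch below is an assumption-laden illustration: the helper names are hypothetical, and the comparison looks only at the numeric release part, ignoring suffixes such as `.dev0`.

```python
import re
from importlib import metadata

REFERENCE_VERSION = "0.15.0.dev0"  # version the training script was based on


def release_tuple(version: str) -> tuple:
    """Return the leading numeric part, e.g. '0.15.0.dev0' -> (0, 15, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def is_newer_than_reference(installed: str, reference: str = REFERENCE_VERSION) -> bool:
    """True if the installed release is strictly newer than the reference."""
    return release_tuple(installed) > release_tuple(reference)


def installed_diffusers_version():
    """Return the installed diffusers version string, or None if absent."""
    try:
        return metadata.version("diffusers")
    except metadata.PackageNotFoundError:
        return None
```

For example, `is_newer_than_reference("0.24.0")` returns `True`, which is the situation described here. A newer version may still work, but API differences are a likely first suspect if the training script fails.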
