1. Spark on Hive configuration

1) Configure Spark on Hive on the Spark client

In the Spark client installation, create the file hive-site.xml under spark-2.3.1/conf:

<configuration>

<property>

<name>hive.metastore.uris</name>

<value>thrift://mynode1:9083</value>

</property>

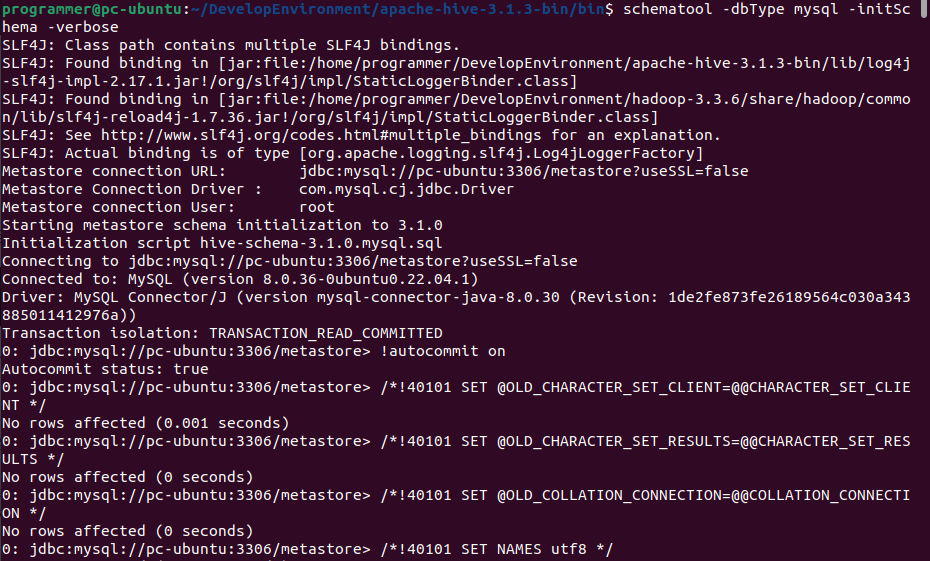

</configuration>2)、启动Hive的metastore服务

    hive --service metastore

3) Start the ZooKeeper cluster and the HDFS cluster
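Before moving on to step 4, it can help to confirm that the metastore from step 2 is actually listening. The following is a minimal sketch, assuming bash (it relies on bash's /dev/tcp redirection) and using a hypothetical helper name, port_open; the host and port in the usage comment come from hive.metastore.uris in hive-site.xml.

```shell
#!/usr/bin/env bash
# port_open is a hypothetical helper, not part of Hive or Spark.
# It returns 0 if a TCP connection to host:port can be opened, non-zero otherwise.
# It uses bash's built-in /dev/tcp redirection, so it needs bash rather than plain sh.
port_open() {
  local host="$1" port="$2"
  # The subshell opens the socket on fd 3 and closes it again when it exits.
  (exec 3<>"/dev/tcp/${host}/${port}") 2>/dev/null
}

# Usage (host and port taken from hive.metastore.uris):
#   port_open mynode1 9083 && echo "metastore reachable"
```

A refused connection returns immediately, but a filtered port may block until the TCP connect timeout expires.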

4) Start spark-shell, count the rows of a Hive table, and compare the elapsed time with the same count query run in Hive

    ./spark-shell \
      --master spark://node1:7077,node2:7077 \
      --executor-cores 1 \
      --executor-memory 1g \
      --total-executor-cores 1

    import org.apache.spark.sql.hive.HiveContext

    val hc = new HiveContext(sc)
    hc.sql("show databases").show
    hc.sql("use default").show
    hc.sql("select count(*) from jizhan").show

- Note:

If Spark cannot find the HDFS cluster path, set the HDFS path in conf/spark-env.sh on the client machine:
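The setting itself is only visible in the screenshot. A common way to make HDFS visible to Spark is to point HADOOP_CONF_DIR at the directory that holds the Hadoop client configuration. The fragment below is a sketch under that assumption; the path /opt/hadoop/etc/hadoop is hypothetical and not taken from the article.

```shell
# conf/spark-env.sh (fragment)
# Assumption: core-site.xml and hdfs-site.xml live in /opt/hadoop/etc/hadoop.
# Replace this hypothetical path with the real one on the client machine.
export HADOOP_CONF_DIR=/opt/hadoop/etc/hadoop
```

Restart spark-shell after editing the file so the new environment is picked up.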

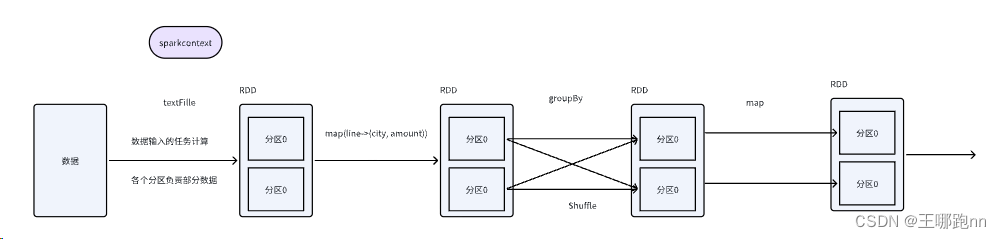
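For the timing comparison in step 4, one simple option on the Hive side is to wrap the CLI call in a small timer. This is a minimal sketch; elapsed_seconds is a hypothetical helper name, and the Hive call is left as a comment so the block runs on a machine without Hive installed.

```shell
#!/usr/bin/env bash
# elapsed_seconds is a hypothetical helper, not part of Hive or Spark.
# It runs the given command and reports its wall-clock duration,
# in whole seconds, on stderr so the command's own output stays untouched.
elapsed_seconds() {
  local start end
  start=$(date +%s)
  "$@"
  end=$(date +%s)
  echo "elapsed: $((end - start))s" >&2
}

# Usage for the Hive side (hive -e runs a query string and exits):
#   elapsed_seconds hive -e 'select count(*) from jizhan'
```

Inside spark-shell, `spark.time(...)` (available since Spark 2.1) prints the elapsed time of the wrapped expression, which gives the Spark-side number to compare against.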

2. Loading Hive data into a DataFrame

In Spark 2.0 and later, the SparkSession object is recommended. Reading data from Hive requires enabling Hive support.
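The listings in this section load two local files, /root/test/student_infos and /root/test/student_scores, into tables declared as delimited text. The article does not show the files, so the sketch below generates stand-ins, assuming tab-separated fields and hypothetical rows, and writes them to a temporary directory rather than /root/test.

```shell
#!/usr/bin/env bash
set -eu

# Hypothetical location; the article itself uses /root/test.
DATA_DIR="$(mktemp -d)"

# name<TAB>age, matching student_infos (name STRING, age INT).
# printf reuses the format for each pair of arguments, one line per pair.
printf '%s\t%s\n' zhangsan 18 lisi 19 wangwu 20 > "$DATA_DIR/student_infos"

# name<TAB>score, matching student_scores (name STRING, score INT).
printf '%s\t%s\n' zhangsan 95 lisi 72 wangwu 88 > "$DATA_DIR/student_scores"

# Sanity check: every line must have exactly two tab-separated fields.
awk -F'\t' 'NF != 2 { bad = 1 } END { exit bad }' \
  "$DATA_DIR/student_infos" "$DATA_DIR/student_scores"

echo "sample files written to $DATA_DIR"
```

Copy the two files to /root/test, or adjust the LOAD DATA LOCAL INPATH statements, before running the job.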

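    ./spark-submit \
      --master spark://node1:7077,node2:7077 \
      --executor-cores 1 \
      --executor-memory 2G \
      --total-executor-cores 1 \
      --class com.lw.sparksql.dataframe.CreateDFFromHive \
      /root/test/HiveTest.jar

Java (this listing uses the Spark 1.x HiveContext and DataFrame API; in Spark 2.x the Java type is Dataset<Row>):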

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaSparkContext;
    import org.apache.spark.sql.DataFrame;
    import org.apache.spark.sql.Row;
    import org.apache.spark.sql.SaveMode;
    import org.apache.spark.sql.hive.HiveContext;

    SparkConf conf = new SparkConf();
    conf.setAppName("hive");
    JavaSparkContext sc = new JavaSparkContext(conf);
    // HiveContext is a subclass of SQLContext.
    HiveContext hiveContext = new HiveContext(sc);
    hiveContext.sql("USE spark");
    hiveContext.sql("DROP TABLE IF EXISTS student_infos");
    // Create the student_infos table in Hive as tab-delimited text.
    hiveContext.sql("CREATE TABLE IF NOT EXISTS student_infos (name STRING, age INT) row format delimited fields terminated by '\t'");
    hiveContext.sql("LOAD DATA LOCAL INPATH '/root/test/student_infos' INTO TABLE student_infos");
    hiveContext.sql("DROP TABLE IF EXISTS student_scores");
    hiveContext.sql("CREATE TABLE IF NOT EXISTS student_scores (name STRING, score INT) row format delimited fields terminated by '\t'");
    hiveContext.sql("LOAD DATA "
        + "LOCAL INPATH '/root/test/student_scores' "
        + "INTO TABLE student_scores");

    // Query the tables to produce a DataFrame.
    DataFrame goodStudentsDF = hiveContext.sql("SELECT si.name, si.age, ss.score "
        + "FROM student_infos si "
        + "JOIN student_scores ss "
        + "ON si.name=ss.name "
        + "WHERE ss.score>=80");
    hiveContext.sql("DROP TABLE IF EXISTS good_student_infos");
    goodStudentsDF.registerTempTable("goodstudent");
    DataFrame result = hiveContext.sql("select * from goodstudent");
    result.show();
    // Save the result to the Hive table good_student_infos.
    goodStudentsDF.write().mode(SaveMode.Overwrite).saveAsTable("good_student_infos");
    Row[] goodStudentRows = hiveContext.table("good_student_infos").collect();
    for (Row goodStudentRow : goodStudentRows) {
        System.out.println(goodStudentRow);
    }

    sc.stop();

Scala (Spark 2.x, using SparkSession):

    import org.apache.spark.sql.{SaveMode, SparkSession}

    val spark = SparkSession.builder().appName("CreateDataFrameFromHive").enableHiveSupport().getOrCreate()
    spark.sql("use spark")
    spark.sql("drop table if exists student_infos")
    spark.sql("create table if not exists student_infos (name string,age int) row format delimited fields terminated by '\t'")
    spark.sql("load data local inpath '/root/test/student_infos' into table student_infos")

    spark.sql("drop table if exists student_scores")
    spark.sql("create table if not exists student_scores (name string,score int) row format delimited fields terminated by '\t'")
    spark.sql("load data local inpath '/root/test/student_scores' into table student_scores")
    // val frame: DataFrame = spark.table("student_infos")
    // frame.show(100)

    val df = spark.sql("select si.name,si.age,ss.score from student_infos si,student_scores ss where si.name = ss.name")
    df.show(100)
    spark.sql("drop table if exists good_student_infos")
    // Write the result to a Hive table.
    df.write.mode(SaveMode.Overwrite).saveAsTable("good_student_infos")

Original article: https://blog.csdn.net/yaya_jn/article/details/134684055
