目录

本文主要介绍了pod资源与pod相关的亲和性,以及pod的重启策略

pod亲和性与反亲和性

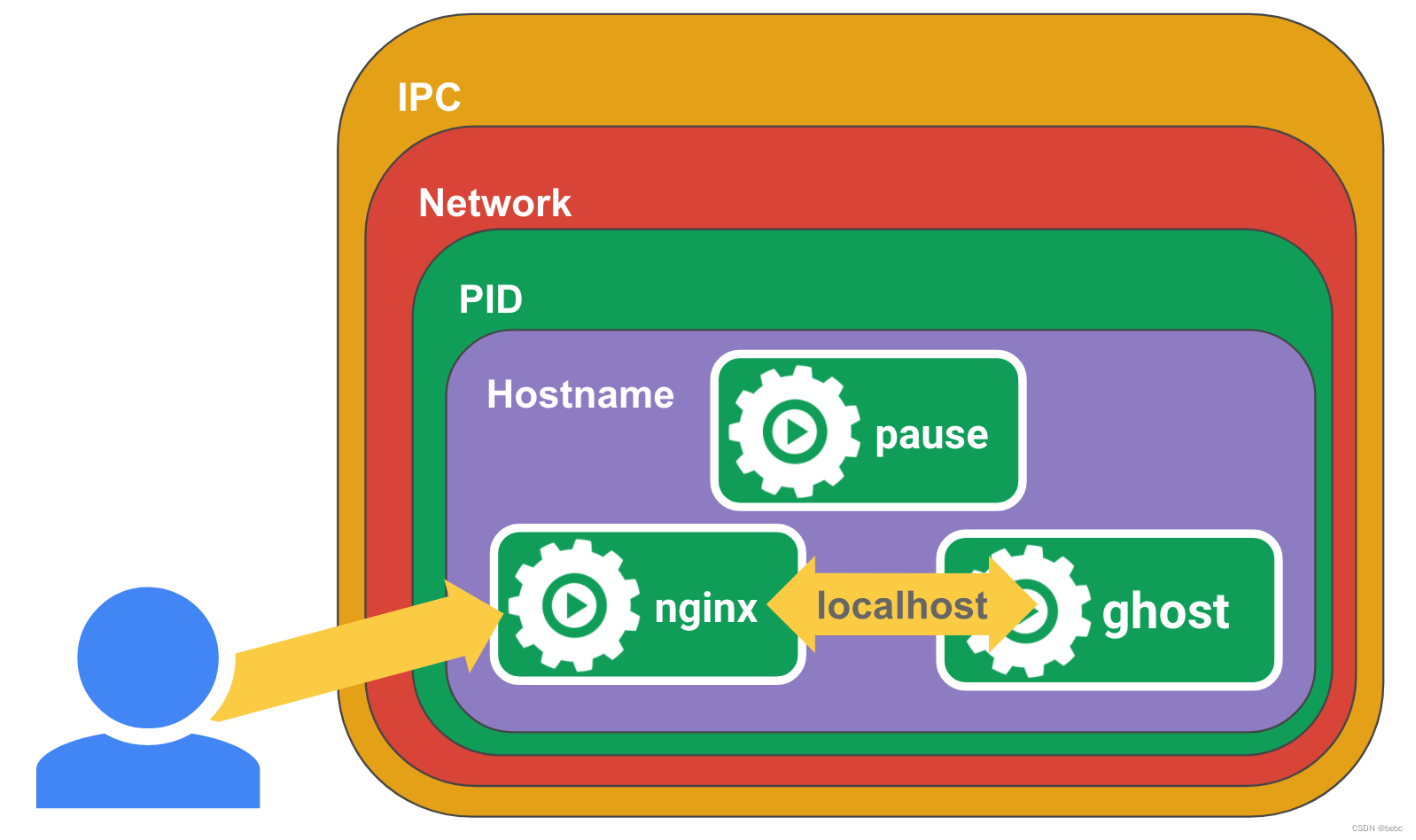

pod亲和性(podAffinity)有两种 1.podaffinity,即联系比较紧密的pod更倾向于使用同一个区域 比如tomcat和nginx这样资源的利用效率更高

2.podunaffinity,即两套完全相同,或两套完全不同功能的服务 为了不互相影响容灾效果,或者让服务之间不会互相影响,更倾向于不适用同一个区域

那么如何判断是不是“同一个区域”就非常重要

#查看帮助

kubectl explain pods.spec.affinity.podAffinity

preferredDuringSchedulingIgnoredDuringExecution #软亲和性,尽可能在一起

requiredDuringSchedulingIgnoredDuringExecution #硬亲和性,一定要在一起

pod亲和性

#硬亲和性

kubectl explain pods.spec.affinity.podAffinity.requiredDuringSchedulingIgnoredDuringExecution

labelSelector <Object> #以标签为筛选条件,选择一组亲和的pod

namespaceSelector <Object> #以命名空间为筛选条件,选择一组亲和的pod

namespaces <[]string> #确定命名空间的位置

topologyKey <string> -required- #拓扑逻辑键,根据xx判断是否是同一位置

cat > qinhe-pod1.yaml << EOF

apiVersion: v1

kind: Pod

metadata:

name: qinhe1

namespace: default

labels:

user: ws

spec:

containers:

- name: qinhe1

image: docker.io/library/nginx

imagePullPolicy: IfNotPresent

EOF

kubectl apply -f qinhe-pod1.yaml #定义一个初始的pod,后面的pod可以依次为参照

echo "

apiVersion: v1

kind: Pod

metadata:

name: qinhe2

labels:

app: app1

spec:

containers:

- name: qinhe2

image: docker.io/library/nginx

imagePullPolicy: IfNotPresent

affinity:

podAffinity: # 和pod亲和性

requiredDuringSchedulingIgnoredDuringExecution:

- labelSelector: # 以标签为筛选条件

matchExpressions: # 以表达式进行匹配

- {key: user, operator: In, values: ["ws"]}

topologyKey: kubernetes.io/hostname

#带有kubernetes.io/hostname标签相同的被认为是同一个区域,即以主机名区分

#标签的node被认为是统一位置

" > qinhe-pod2.yaml

kubectl apply -f qinhe-pod2.yaml

kubectl get pods -owide #因为hostname node1和node2不同,所以只会调度到node1

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

qinhe1 1/1 Running 0 68s 10.10.179.9 ws-k8s-node1 <none> <none>

qinhe2 1/1 Running 0 21s 10.10.179.10 ws-k8s-node1 <none> <none>

#修改

...

topologyKey: beta.kubernetes.io/arch

... #node1和node2这两个标签都相同

kubectl delete -f qinhe-pod2.yaml

kubectl apply -f qinhe-pod2.yaml

kubectl get pods -owide #再查看时会发现qinhe2分到了node2

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

qinhe1 1/1 Running 0 4m55s 10.10.179.9 ws-k8s-node1 <none> <none>

qinhe2 1/1 Running 0 15s 10.10.234.68 ws-k8s-node2 <none> <none>

#清理环境

kubectl delete -f qinhe-pod1.yaml

kubectl delete -f qinhe-pod2.yaml

pod反亲和性

kubectl explain pods.spec.affinity.podAntiAffinity

preferredDuringSchedulingIgnoredDuringExecution <[]Object>

requiredDuringSchedulingIgnoredDuringExecution <[]Object>

#硬亲和性

#创建qinhe-pod3.yaml

cat > qinhe-pod3.yaml << EOF

apiVersion: v1

kind: Pod

metadata:

name: qinhe3

namespace: default

labels:

user: ws

spec:

containers:

- name: qinhe3

image: docker.io/library/nginx

imagePullPolicy: IfNotPresent

EOF

#创建qinhe-pod4.yaml

echo "

apiVersion: v1

kind: Pod

metadata:

name: qinhe4

labels:

app: app1

spec:

containers:

- name: qinhe4

image: docker.io/library/nginx

imagePullPolicy: IfNotPresent

affinity:

podAntiAffinity: # 和pod亲和性

requiredDuringSchedulingIgnoredDuringExecution:

- labelSelector: # 以标签为筛选条件

matchExpressions: # 以表达式进行匹配

- {key: user, operator: In, values: ["ws"]} #表达式user=ws

topologyKey: kubernetes.io/hostname #以hostname作为区分是否同个区域

" > qinhe-pod4.yaml

kubectl apply -f qinhe-pod3.yaml

kubectl apply -f qinhe-pod4.yaml

#分配到了不同的node

kubectl get pods -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

qinhe3 1/1 Running 0 9s 10.10.179.11 ws-k8s-node1 <none> <none>

qinhe4 1/1 Running 0 8s 10.10.234.70 ws-k8s-node2 <none> <none>

#修改topologyKey

pod4修改为topologyKey: user

kubectl label nodes ws-k8s-node1 user=xhy

kubectl label nodes ws-k8s-node2 user=xhy

#现在node1和node2都会被pod4识别为同一位置,因为node的label中user值相同

kubectl delete -f qinhe-pod4.yaml

kubectl apply -f qinhe-pod4.yaml

#直接显示离线

kubectl get pods -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

qinhe3 1/1 Running 0 9m59s 10.10.179.12 ws-k8s-node1 <none> <none>

qinhe4 0/1 Pending 0 2s <none> <none> <none> <none>

#查看日志

Warning FailedScheduling 74s default-scheduler 0/4 nodes are available: 2 node(s) didn't match pod anti-affinity rules, 2 node(s) had untolerated taint {node-role.kubernetes.io/control-plane: }. preemption: 0/4 nodes are available: 2 No preemption victims found for incoming pod, 2 Preemption is not helpful for scheduling..

#pod反亲和性的软亲和性与node亲和性的软亲和性同理

#清理环境

kubectl label nodes ws-k8s-node1 user-

kubectl label nodes ws-k8s-node2 user-

kubectl delete -f qinhe-pod3.yaml

kubectl delete -f qinhe-pod4.yaml

pod状态与重启策略

pod状态

1.pending——挂起

(1)正在创建pod,检查存储、网络、下载镜像等问题

(2)条件不满足,比如硬亲和性,污点等调度条件不满足

2.failed——失败

至少有一个容器因为失败而停止,即非0状态退出

3.unknown——未知

apiserver连不上node节点的kubelet,通常是网络问题

4.Error——错误

5.succeeded——成功

pod所有容器成功终止

6.Unschedulable

pod不能被调度

7.PodScheduled

正在调度中

8.Initialized

pod初始化完成

9.ImagePullBackOff

容器拉取失败

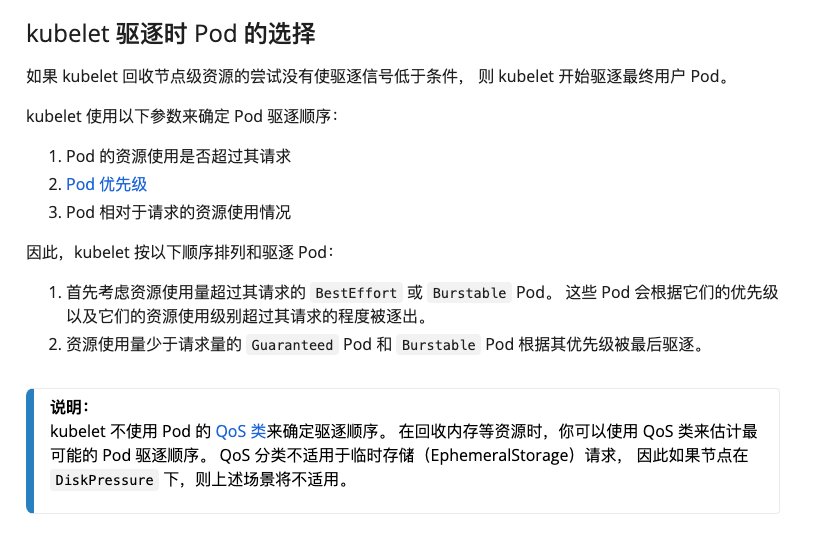

10.evicted

node节点资源不足

11.CrashLoopBackOff

容器曾经启动,但又异常退出了

pod重启策略

当容器异常时,可以通过设置RestartPolicy字段,设置pod重启策略来对pod进行重启等操作

#查看帮助

kubectl explain pod.spec.restartPolicy

KIND: Pod

VERSION: v1

FIELD: restartPolicy <string>

DESCRIPTION:

Restart policy for all containers within the pod. One of Always, OnFailure,

Never. Default to Always. More info:

<https://kubernetes.io/docs/concepts/workloads/pods/pod-lifecycle/#restart-policy>

Possible enum values:

- `"Always"` #只要异常退出,立即自动重启

- `"Never"` #不会重启容器

- `"OnFailure"`#容器错误退出,即退出码不为0时,则自动重启

#测试Always策略,创建always.yaml

cat > always.yaml << EOF

apiVersion: v1

kind: Pod

metadata:

name: always-pod

namespace: default

spec:

restartPolicy: Always

containers:

- name: test-pod

image: docker.io/library/tomcat

imagePullPolicy: IfNotPresent

EOF

kubectl apply -f always.yaml

kubectl get po #查看状态

NAME READY STATUS RESTARTS AGE

always-pod 1/1 Running 0 22s

#进入容器去关闭容器

kubectl exec -it always-pod -- /bin/bash

shutdown.sh

#查看当前状态,可以看到always-pod重启计数器为1

kubectl get po

NAME READY STATUS RESTARTS AGE

always-pod 1/1 Running 1 (5s ago) 70s

#测试never策略,创建never.yaml

cat > never.yaml << EOF

apiVersion: v1

kind: Pod

metadata:

name: never-pod

namespace: default

spec:

restartPolicy: Never

containers:

- name: test-pod

image: docker.io/library/tomcat

imagePullPolicy: IfNotPresent

EOF

kubectl apply -f never.yaml

kubectl exec -it never-pod -- /bin/bash

shutdown.sh

#不会重启,状态为completed

kubectl get pods | grep never

never-pod 0/1 Completed 0 73s

#测试OnFailure策略,创建onfailure.yaml

cat > onfailure.yaml << EOF

apiVersion: v1

kind: Pod

metadata:

name: onfailure-pod

namespace: default

spec:

restartPolicy: OnFailure

containers:

- name: test-pod

image: docker.io/library/tomcat

imagePullPolicy: IfNotPresent

EOF

kubectl apply -f onfailure.yaml

#进去后进行异常退出

kubectl exec -it onfailure-pod -- /bin/bash

kill 1

#查看pods状态,已经重启

kubectl get po | grep onfailure

onfailure-pod 1/1 Running 1 (43s ago) 2m11s

#进入后进行正常退出

kubectl exec -it onfailure-pod -- /bin/bash

shutdown.sh

#查看pods状态,没有重启,进入completed状态

kubectl get po | grep onfailure

onfailure-pod 0/1 Completed 1 3m58s

#清理环境

kubectl delete -f always.yaml

kubectl delete -f never.yaml

kubectl delete -f onfailure.yaml

原文地址:https://blog.csdn.net/qq_72569959/article/details/135614405

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。

如若转载,请注明出处:http://www.7code.cn/show_58176.html

如若内容造成侵权/违法违规/事实不符,请联系代码007邮箱:suwngjj01@126.com进行投诉反馈,一经查实,立即删除!